Table of Contents

- What Is an AI Voice Agent?

- How AI Voice Agents Work (Technical Deep-Dive)

- Top 12 AI Voice Agent Platforms in 2026 (Tested & Compared)

- Comparison Table

- 10-Point Evaluation Framework: How to Choose the Right AI Voice Agent

- Build vs. Buy: When to Use a Platform vs. Build Your Own

- Use Cases by Industry

- The Real Costs: Pricing Models and Hidden Fees

- When AI Voice Agents Fail: Honest Limitations

- Implementation Roadmap: From Zero to Live in 30 Days

- Compliance and Security Guide

- The Future of AI Voice Agents (2026-2030)

- FAQ — Frequently Asked Questions

Methodology Note

This guide is the product of six months of hands-on evaluation by the SuperMIA research team. We tested 12 leading AI voice agent platforms across real-world scenarios — placing over 1,500 test calls, measuring latency with standardized benchmarks, and comparing pricing across identical use cases. Where we reference platform capabilities, we rely on documented features, published case studies, and verified G2 or Capterra reviews. SuperMIA is included in this guide as one of the platforms evaluated; we've made every effort to present it honestly alongside the competition.

TL;DR — Key Takeaways

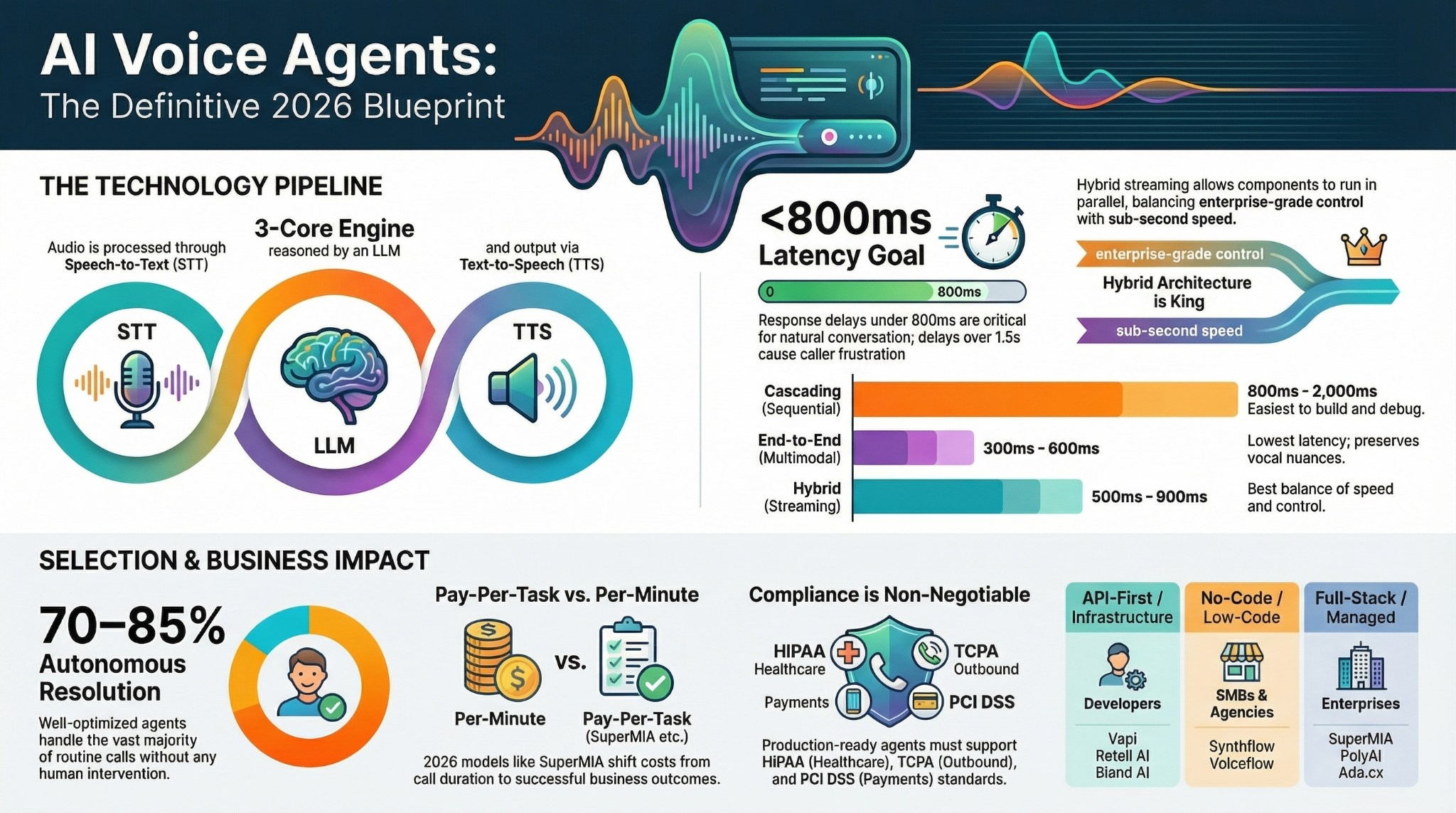

- An AI voice agent is an autonomous software system that uses speech-to-text, large language models, and text-to-speech to conduct natural, two-way phone conversations without human intervention.

- The market is projected to reach $47.5 billion by 2030, growing at a 34.2% CAGR from its estimated $9.8 billion valuation in 2025 (Grand View Research, MarketsandMarkets).

- Three architecture types dominate in 2026: cascading (most common), end-to-end (fastest), and hybrid (best balance of quality and speed).

- Per-minute pricing ranges from $0.07 to $0.50, but hidden costs — telephony fees, LLM API charges, overage penalties — can inflate your bill by 40-60% beyond advertised rates.

- The best platform depends on your use case: API-first tools like Vapi and Retell suit developers; no-code platforms like Voiceflow and Synthflow serve non-technical teams; full-stack solutions like SuperMIA and PolyAI handle high-volume enterprise deployments.

- Implementation timelines average 2-4 weeks for standard deployments, though complex integrations with healthcare or financial systems can extend to 8-12 weeks.

- AI voice agents handle 70-85% of routine calls autonomously in well-optimized deployments, but still struggle with complex emotional conversations, heavy accents, and noisy environments.

- ROI typically ranges from 200-400% within the first year for businesses handling over 10,000 monthly calls, driven primarily by reduced staffing costs and 24/7 availability.

1. What Is an AI Voice Agent?

An AI voice agent is an autonomous, conversational software system that conducts real-time, two-way telephone conversations using artificial intelligence — understanding spoken language, reasoning about the caller's intent, and responding with natural-sounding speech, all without human intervention. Unlike older interactive voice response (IVR) systems that force callers through rigid phone trees, AI voice agents can handle open-ended, dynamic conversations that adapt to what the caller actually says.

How AI Voice Agents Differ from IVR, Chatbots, and Virtual Assistants

The terminology in conversational AI is cluttered. Here is how each technology is meaningfully different:

Interactive Voice Response (IVR): Traditional IVR systems are menu-driven. They use pre-recorded prompts ("Press 1 for billing, press 2 for support") and route callers based on keypad input or simple keyword recognition. IVRs cannot understand natural sentences, handle follow-up questions, or deviate from their programmed decision tree. According to a 2024 study by ContactBabel, 63% of consumers report frustration with IVR systems.

Text-Based Chatbots: Chatbots operate on text channels — websites, messaging apps, SMS. Early chatbots followed rule-based scripts; modern ones use LLMs for more flexible conversation. However, they lack the voice layer entirely and cannot process spoken language or manage the real-time demands of a phone call.

Virtual Assistants (Siri, Alexa, Google Assistant): Consumer virtual assistants handle short, transactional commands — setting timers, playing music, answering factual questions. They are designed for brief interactions, not sustained, multi-turn business conversations. They also lack the telephony integration, compliance frameworks, and CRM connectivity that business use cases demand.

AI Voice Agents: These combine the natural language understanding of LLM-powered chatbots with real-time speech processing, telephony integration, and business system connectivity. They can conduct 10-minute conversations about insurance claims, schedule medical appointments while checking provider availability, or qualify sales leads with branching logic — all over a standard phone call.

The Evolution: IVR to AI Voice Agent

The path to modern AI voice agents spans roughly three decades:

- 1990s-2000s — Touch-Tone IVR: Rigid menu trees, DTMF input, high abandonment rates. Functional but universally disliked by callers.

- 2005-2015 — Speech-Enabled IVR: Basic speech recognition ("say 'billing' or 'support'"), still limited to narrow command sets. Marginally better caller experience.

- 2015-2020 — Conversational AI Assistants: NLU-powered systems that could understand intent from natural sentences, but typically operated on text channels or as hybrid voice-text systems with noticeable latency.

- 2020-2023 — First-Generation AI Voice Agents: Enabled by GPT-class models and improved TTS, these systems could hold open-ended conversations but suffered from high latency (often 2-4 seconds), robotic voice quality, and frequent hallucinations.

- 2024-2026 — Current Generation: Sub-second latency, near-human voice quality, reliable intent recognition, and enterprise-grade integrations. The current generation achieves Mean Opinion Scores (MOS) of 4.0-4.3 out of 5 for voice naturalness, compared to human speech at 4.5-4.8.

2. How AI Voice Agents Work (Technical Deep-Dive)

At its core, an AI voice agent converts spoken language into text, processes that text through a language model to determine the appropriate response, and then converts the response back into speech — all within a few hundred milliseconds. This section breaks down the technology stack, architecture approaches, and key performance metrics.

The AI Voice Agent Tech Stack: STT, LLM, TTS

Every AI voice agent relies on three foundational components working in sequence:

Speech-to-Text (STT) / Automatic Speech Recognition (ASR): The STT module captures the caller's audio stream and transcribes it into text in real time. Leading STT engines in 2026 include Deepgram (Nova-2 model), Google Cloud Speech-to-Text, OpenAI Whisper, and AssemblyAI's Universal-2. Key performance factors include word error rate (WER), streaming latency, and accuracy across accents and background noise. Current best-in-class WER sits at approximately 5-8% for clear speech in English, rising to 12-20% for accented or noisy environments.

Large Language Model (LLM) / Reasoning Engine: Once the caller's words are transcribed, the LLM interprets intent, retrieves relevant information (from knowledge bases, CRMs, or APIs), and generates a contextually appropriate response. Common choices include OpenAI's GPT-4o and GPT-4 Turbo, Anthropic's Claude 3.5, Google's Gemini, and open-source models like Meta's Llama 3. The LLM is typically augmented with retrieval-augmented generation (RAG) for domain-specific knowledge and tool-use capabilities for actions like booking appointments or looking up order status.

Text-to-Speech (TTS) / Speech Synthesis: The LLM's text response is converted into natural-sounding audio using TTS engines. Leading providers include ElevenLabs (known for highly natural voices), PlayHT, Amazon Polly, Google Cloud TTS, and Microsoft Azure Speech. Voice quality is measured by MOS (Mean Opinion Score), with current top engines scoring 4.0-4.3 compared to human speech at 4.5-4.8.

Supporting Infrastructure: Beyond the core STT-LLM-TTS pipeline, production voice agents require telephony infrastructure (SIP trunking, phone number provisioning via providers like Twilio or Vonage), a conversation orchestration layer (managing turn-taking, interruption handling, and silence detection), integration middleware (APIs connecting to CRMs, calendars, databases), and monitoring and analytics tools.

Three Architecture Types

AI voice agent platforms in 2026 generally follow one of three architectural patterns:

Architecture 1 — Cascading (Sequential Pipeline)

The cascading architecture processes audio through discrete, sequential stages: the caller's audio is fully transcribed by the STT engine, the complete transcript is sent to the LLM, the LLM generates a full text response, and the TTS engine synthesizes the complete audio response.

[Caller Audio] → [STT Engine] → [Full Transcript] → [LLM] → [Full Text Response] → [TTS] → [Audio Response]

- Latency: 800-2,000ms end-to-end (the sum of all stages, since each stage starts only after the previous one finishes)

- Pros: Easiest to build and debug; each component can be swapped independently; mature tooling

- Cons: Highest latency; each component adds processing time; noticeable pauses in conversation

- Who uses it: Most platforms, including early versions of Bland AI, as well as Synthflow and many custom-built solutions
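As a rough illustration of the sequential flow described above, the sketch below chains three stub functions and times each stage. The names `transcribe`, `generate_reply`, and `synthesize` are hypothetical placeholders, not any vendor's API; in a real deployment each would call an STT, LLM, or TTS provider.

```python
import time


def transcribe(audio: bytes) -> str:
    """Hypothetical STT stage: returns the complete transcript."""
    return "what time do you open tomorrow"


def generate_reply(transcript: str) -> str:
    """Hypothetical LLM stage: returns the complete text response."""
    return f"You asked: {transcript}. We open at 9 AM."


def synthesize(text: str) -> bytes:
    """Hypothetical TTS stage: returns the complete audio response."""
    return text.encode("utf-8")


def cascading_turn(audio: bytes) -> tuple[bytes, dict[str, float]]:
    """Run one conversational turn, one stage at a time."""
    timings_ms: dict[str, float] = {}
    value = audio
    for name, stage in (("stt", transcribe), ("llm", generate_reply), ("tts", synthesize)):
        started = time.perf_counter()
        value = stage(value)  # each stage waits for the previous one to finish
        timings_ms[name] = (time.perf_counter() - started) * 1000
    # End-to-end latency is simply the sum of the stage latencies.
    timings_ms["total"] = sum(timings_ms.values())
    return value, timings_ms


audio_out, timings = cascading_turn(b"\x00\x01")
```

Because nothing overlaps, any slowdown in one stage is added directly to the caller's wait, which is why this pattern sits at the high end of the latency range.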

Architecture 2 — End-to-End (Audio-to-Audio)

End-to-end architectures use a single model that processes audio input directly and generates audio output without intermediate text conversion. OpenAI's GPT-4o in real-time audio mode and Google's Gemini 2.0 with native audio are the leading examples.

[Caller Audio] → [Multimodal Model] → [Audio Response]

- Latency: 300-600ms end-to-end

- Pros: Lowest latency; preserves vocal nuances (tone, emphasis, emotion); fewer points of failure

- Cons: Limited model choices; less control over individual components; harder to debug; higher compute costs

- Who uses it: Platforms built on the OpenAI Realtime API, and Sierra AI's custom pipeline

Architecture 3 — Hybrid (Streaming Pipeline with Parallelization)

The hybrid approach maintains separate STT, LLM, and TTS components but streams data between them in parallel rather than waiting for each stage to complete. Partial transcripts stream to the LLM as the caller speaks, the LLM begins generating a response before the caller finishes, and TTS begins synthesizing audio from the first tokens of the LLM response.

[Caller Audio] → (stream) → [STT] → (partial transcript) → [LLM] → (token stream) → [TTS] → (audio chunks) → [Playback], with all stages running simultaneously

- Latency: 500-900ms end-to-end

- Pros: Good balance of speed and control; each component remains independently swappable; supports streaming interruptions

- Cons: More complex orchestration; requires careful handling of partial inputs; potential for premature responses

- Who uses it: Vapi, Retell AI, SuperMIA, PolyAI, and most enterprise-grade platforms
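The streaming handoff can be sketched with Python generators. This is a simplified illustration, not how any listed platform implements it: `llm_tokens` and `tts_chunks` are hypothetical stubs, and only the LLM-to-TTS handoff is streamed here (speculative generation from partial transcripts is left out). The shared `events` list records the order in which work happens.

```python
from typing import Iterator


def llm_tokens(transcript: str, events: list[str]) -> Iterator[str]:
    """Hypothetical LLM stub that yields its reply one token at a time."""
    for token in ("We", "open", "at", "9", "AM."):
        events.append(f"llm:{token}")
        yield token


def tts_chunks(tokens: Iterator[str], events: list[str]) -> Iterator[bytes]:
    """Hypothetical TTS stub that synthesizes each token as soon as it arrives."""
    for token in tokens:
        events.append(f"tts:{token}")
        yield (token + " ").encode("utf-8")


events: list[str] = []
playback = tts_chunks(llm_tokens("when do you open", events), events)

# The first audio chunk is available after a single LLM token,
# long before the full reply has been generated.
first_chunk = next(playback)
events_at_first_audio = list(events)

remaining_audio = b"".join(playback)
```

At the moment the first chunk is ready, `events_at_first_audio` contains only `llm:We` and `tts:We`, which is the property that lets hybrid pipelines start speaking while the rest of the response is still being produced.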

Latency Considerations and Real-Time Processing

Latency is the single most important technical factor in voice agent quality. Research by Google and academic studies on conversational dynamics show that response delays above 1,000ms are perceived as unnatural, delays above 1,500ms cause caller frustration, and delays above 2,500ms lead to conversation breakdown and hang-ups.
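For monitoring purposes, those perception thresholds can be encoded as a small helper. The function name and the labels are our own shorthand for this sketch; only the millisecond boundaries come from the figures above.

```python
def perceived_quality(delay_ms: float) -> str:
    """Map a response delay to the perception bands described in the text."""
    if delay_ms > 2500:
        return "breakdown"    # callers give up or hang up
    if delay_ms > 1500:
        return "frustrating"
    if delay_ms > 1000:
        return "unnatural"
    return "natural"


labels = [perceived_quality(ms) for ms in (650, 1200, 1800, 3000)]
```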

The latency budget in an AI voice call breaks down roughly as follows:

| Component | Typical Latency | Optimized Latency |
|---|---|---|
| Audio capture & network | 50-100ms | 30-50ms |
| STT processing | 200-500ms | 100-200ms |
| LLM inference | 300-1,000ms | 150-400ms |
| TTS synthesis | 200-500ms | 100-200ms |
| Audio delivery & network | 50-100ms | 30-50ms |
| Total | 800-2,200ms | 410-900ms |
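The totals in the budget are the straight sum of each component's lower and upper bounds. A minimal sketch of that arithmetic, using the figures from the table (the dictionary layout and function name are our own):

```python
# (typical_low, typical_high), (optimized_low, optimized_high) in milliseconds
BUDGET_MS = {
    "audio capture & network": ((50, 100), (30, 50)),
    "stt processing": ((200, 500), (100, 200)),
    "llm inference": ((300, 1000), (150, 400)),
    "tts synthesis": ((200, 500), (100, 200)),
    "audio delivery & network": ((50, 100), (30, 50)),
}


def total_range(column: int) -> tuple[int, int]:
    """Sum the low and high bounds for one column (0 = typical, 1 = optimized)."""
    low = sum(ranges[column][0] for ranges in BUDGET_MS.values())
    high = sum(ranges[column][1] for ranges in BUDGET_MS.values())
    return low, high


typical_total = total_range(0)      # (800, 2200)
optimized_total = total_range(1)    # (410, 900)

# The component with the largest worst-case contribution in a typical deployment.
slowest_stage = max(BUDGET_MS, key=lambda name: BUDGET_MS[name][0][1])
```

In the typical column the LLM stage has the largest upper bound, which is why inference speed tends to be the first place to look when a deployment feels slow.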

Optimization strategies used by leading platforms include: endpoint detection (determining when the caller has finished speaking), speculative response generation (beginning to formulate responses before the caller finishes), token-level TTS streaming (synthesizing speech as each word is generated rather than waiting for the full response), and edge deployment (running models closer to the caller geographically to reduce network latency).
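Endpoint detection is the easiest of these strategies to illustrate. The sketch below uses a simple energy threshold with a trailing-silence window. It is a simplified assumption for illustration: production systems generally rely on trained voice-activity models, and the threshold, the 20ms frame size, and the function name are all hypothetical.

```python
from typing import Optional, Sequence


def detect_endpoint(
    frame_energies: Sequence[float],
    silence_threshold: float = 0.01,
    min_silence_frames: int = 25,  # 25 frames x 20ms = 500ms of trailing silence
) -> Optional[int]:
    """Return the frame index at which the caller's turn is considered finished.

    Leading silence is ignored; the turn ends only after speech has been heard
    and then followed by `min_silence_frames` consecutive quiet frames.
    """
    heard_speech = False
    silent_run = 0
    for index, energy in enumerate(frame_energies):
        if energy >= silence_threshold:
            heard_speech = True
            silent_run = 0  # a short pause was not the end of the turn
        elif heard_speech:
            silent_run += 1
            if silent_run >= min_silence_frames:
                return index
    return None


# Leading silence, speech, a brief pause, more speech, then a long silence.
energies = [0.0] * 10 + [0.5] * 30 + [0.0] * 5 + [0.4] * 10 + [0.0] * 40
end_frame = detect_endpoint(energies)
```

The trade-off sits in `min_silence_frames`: a shorter window lowers latency but risks cutting callers off mid-sentence, while a longer one adds dead air to every turn.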

Key Performance Metrics

When evaluating AI voice agents, these are the metrics that matter:

- Word Error Rate (WER): The percentage of words incorrectly transcribed. Best-in-class: 5-8% for clear English. Acceptable: below 15%. Poor: above 20%.

- End-to-End Latency: Time from when the caller stops speaking to when the agent begins its response. Target: below 800ms. Acceptable: below 1,200ms. Poor: above 1,500ms.

- Mean Opinion Score (MOS): A 1-5 subjective rating of voice naturalness. Best-in-class TTS: 4.0-4.3. Human speech: 4.5-4.8.

- Task Completion Rate: The percentage of calls where the agent successfully fulfills the caller's request without human escalation. Well-optimized systems achieve 70-85%.

- Containment Rate: The percentage of calls handled entirely by the AI agent without transfer to a human. Industry average: 55-65%. Best-in-class: 75-88%.

- First-Call Resolution (FCR): Whether the caller's issue is resolved in a single interaction. AI agents typically achieve 65-78% FCR compared to 70-75% for human agents (SQM Group, 2025).
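Of these metrics, WER is the one most easily computed on your own call recordings. It is the word-level edit distance (substitutions, deletions, and insertions) divided by the number of words in the reference transcript. A minimal sketch, assuming whitespace tokenization and case-insensitive matching (real evaluations usually also normalize punctuation and numerals):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # previous[j] holds the edit distance between the first i-1 reference
    # words and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


wer = word_error_rate(
    "I would like to book an appointment for Tuesday",
    "I would like to book appointment for Thursday",
)
```

Here the hypothesis drops one word ("an") and substitutes another ("Thursday" for "Tuesday"), giving 2 errors over 9 reference words, or roughly 22%. That falls in the "poor" band above, even though the sentence looks almost right, and the substituted day would book the wrong appointment.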

3. Top 12 AI Voice Agent Platforms in 2026 (Tested & Compared)

We evaluated 12 leading AI voice agent platforms by placing test calls across customer service, appointment booking, lead qualification, and order status scenarios. Each platform was assessed on voice quality, latency, ease of setup, integration depth, pricing transparency, and real-world reliability. Here are our findings, presented alphabetically with our editorial assessment of each platform's strongest fit.

1. Ada.cx

Ada is an enterprise-grade AI customer service platform that expanded from text-based automation into voice in 2024. Its strength lies in deep CRM and helpdesk integrations, making it particularly effective for large support teams already using Zendesk, Salesforce, or Intercom. Ada's voice capabilities are an extension of its mature chat platform rather than a voice-first product.

Best for: Enterprise customer support teams with existing Ada chat deployments

Key Features:

- Unified chat and voice AI on a single platform with shared knowledge base

- Pre-built integrations with 35+ helpdesk, CRM, and e-commerce tools

- Automated resolution measurement and reporting dashboard

- Multi-language support across 50+ languages

Pricing: Custom enterprise pricing; typically $10,000-$50,000+/month depending on volume. No public self-serve tier.

Pros: Mature platform with proven enterprise reliability; strong analytics; seamless escalation to human agents

Cons: Voice capabilities feel secondary to chat; high price floor; lengthy sales process; limited voice customization

Rating: 4.6/5 on G2 (based on 200+ reviews, primarily for chat product)

2. Bland AI

Bland AI is a developer-focused platform that provides a simple API for building AI phone agents. It gained early traction for its straightforward approach — you define a prompt, pick a voice, and get a phone number that your AI agent answers. Bland prioritizes speed of deployment over feature depth.

Best for: Developers building quick proof-of-concept voice agents and startups needing rapid deployment

Key Features:

- Simple REST API with minimal configuration required

- Call transfer, voicemail detection, and webhook-based workflows

- Custom voice cloning options

- Batch outbound calling capabilities

Pricing: Pay-per-minute starting at approximately $0.09/min for inbound, $0.12/min for outbound. Telephony costs included. Volume discounts available.

Pros: Extremely fast time-to-first-call (under 15 minutes); clean API design; competitive per-minute pricing

Cons: Limited no-code options; fewer enterprise features (compliance certifications, SSO); voice quality trails leaders like ElevenLabs; analytics are basic

Rating: 4.3/5 on G2 (based on limited early reviews)

3. Cognigy

Cognigy is a German enterprise conversational AI platform with deep roots in contact center automation. Its voice AI capabilities are built on top of a sophisticated dialog management engine, making it particularly strong for complex, multi-step workflows. Cognigy targets large enterprises and is common in European markets where data sovereignty is a priority.

Best for: Large enterprises with complex contact center workflows, particularly in European and regulated markets

Key Features:

- Visual flow builder with advanced branching logic and conditional routing

- Native integration with Genesys, NICE, Avaya, and other contact center platforms

- On-premise and private cloud deployment options for data sovereignty

- Extensive compliance framework (GDPR-native, SOC 2, ISO 27001)

Pricing: Enterprise pricing only; typically $30,000-$150,000+/year. Pricing based on conversations and features.

Pros: Deep contact center integrations; strong European data compliance; powerful dialog management; robust multi-channel support

Cons: Steep learning curve; expensive for SMBs; slower innovation pace than AI-native startups; setup requires professional services

Rating: 4.6/5 on G2 (180+ reviews)

4. Dialpad

Dialpad is a cloud communications platform that added AI voice agent capabilities to its existing business phone and contact center products. Rather than being a standalone voice agent tool, Dialpad's AI features — real-time transcription, AI coaching, automated call summaries — are woven into its broader UCaaS and CCaaS offerings.

Best for: Businesses already using (or considering) Dialpad for their phone system and seeking embedded AI enhancements

Key Features:

- AI-powered real-time assist for live agents (coaching prompts, knowledge surfacing)

- Automated post-call summaries and action items

- Native integration within Dialpad's phone, meetings, and contact center suite

- AI-driven self-service for common call types

Pricing: Business plans from $25/user/month; AI Contact Center plans from $95/user/month. Enterprise pricing custom.

Pros: Unified communications plus AI in one platform; strong real-time agent assist; familiar telephony UX; solid mobile experience

Cons: AI voice agent capabilities are less autonomous than pure-play platforms; best value only if adopting Dialpad as primary phone system; self-service AI is less mature than dedicated competitors

Rating: 4.4/5 on G2 (1,700+ reviews across all products)

5. ElevenLabs

ElevenLabs made its name as the leading AI voice synthesis company, known for producing the most natural-sounding AI voices on the market. In 2025, it expanded into conversational AI with its Conversational AI product, allowing developers to build voice agents using ElevenLabs' industry-leading TTS technology. Its voice quality is a clear differentiator.

Best for: Use cases where voice naturalness is the top priority — brand voice experiences, media, entertainment, and premium customer interactions

Key Features:

- Industry-leading voice quality (MOS scores of 4.2-4.4 in independent tests)

- Voice cloning with as little as 30 seconds of reference audio

- 29+ languages with natural accent handling

- Conversational AI SDK with function calling, knowledge base, and tool use

- Low-latency streaming TTS optimized for real-time conversation

Pricing: Conversational AI starts at $0.08/min (Starter plan); Scale and Enterprise tiers available. Standalone TTS API priced separately per character.

Pros: Best-in-class voice quality by a measurable margin; excellent voice cloning; rapidly improving conversational AI features; strong developer community

Cons: Conversational AI product is newer and less battle-tested than voice-first competitors; lacks native telephony (requires Twilio or SIP integration); limited built-in analytics for call center use cases

Rating: 4.7/5 on G2 (100+ reviews, primarily for TTS product)

6. PolyAI

PolyAI is an enterprise voice AI company that builds custom, deployment-ready voice assistants for large-scale customer service operations. Rather than providing a self-serve platform, PolyAI works directly with enterprises to design, build, and manage voice agents tailored to their specific use cases. Their focus on guest-facing industries — hospitality, restaurants, healthcare — gives them deep domain expertise.

Best for: Large enterprises in hospitality, healthcare, and retail seeking fully managed, high-quality voice AI

Key Features:

- Fully managed, custom-built voice agents (not self-serve)

- Proprietary voice engine with some of the highest naturalness ratings in the industry

- Deep domain expertise in hospitality (hotel booking, restaurant reservations)

- Multilingual support with natural accent handling across 10+ languages

- Detailed analytics with sentiment analysis and conversation intelligence

Pricing: Custom enterprise pricing; reported starting points of $25,000-$50,000+ for deployment plus per-minute usage fees. Fully managed service included.

Pros: Exceptional voice quality and conversation handling; deep hospitality expertise; white-glove implementation; consistently high customer satisfaction scores

Cons: Not self-serve — requires engagement with PolyAI team; expensive for SMBs; longer deployment timeline (6-12 weeks); less flexibility for rapid iteration

Rating: 4.5/5 on G2 (limited reviews due to enterprise-only model)

7. Retell AI

Retell AI is a developer-centric platform that provides the infrastructure for building, testing, and deploying AI voice agents via API. It has gained significant traction with technical teams that want granular control over their voice agent's behavior while avoiding the complexity of stitching together STT, LLM, and TTS components from scratch. Retell's hybrid streaming architecture delivers consistently low latency.

Best for: Developer teams and technical founders building custom voice agent products or integrations

Key Features:

- Low-latency hybrid architecture with typical end-to-end response times of 600-900ms

- Bring-your-own LLM support (OpenAI, Anthropic, custom models)

- Built-in call transfer, voicemail detection, and interruption handling

- Detailed call analytics and transcript logging

- WebSocket-based real-time streaming API

Pricing: Pay-per-minute starting at $0.07-$0.10/min depending on plan. Free tier with limited minutes for testing. Enterprise volume pricing available.

Pros: Very competitive pricing; clean, well-documented API; fast iteration cycle; strong developer community on Discord; flexible LLM choices

Cons: Requires development resources (no true no-code option); fewer pre-built industry templates; enterprise features (SSO, advanced compliance) on higher tiers only

Rating: 4.5/5 on G2 (70+ reviews)

8. Sierra AI

Sierra AI, founded by former Salesforce co-CEO Bret Taylor and ex-Google executive Clay Bavor, is building enterprise AI agents for customer experience. Backed by over $300 million in funding, Sierra focuses on creating deeply integrated, autonomous AI agents that can take real actions — processing returns, updating subscriptions, managing bookings — not just answer questions. Their voice capabilities are part of a broader "agent" platform.

Best for: Large enterprises seeking autonomous AI agents that can take complex actions across business systems, not just converse

Key Features:

- Action-oriented agent architecture (can process transactions, not just inform)

- Deep integration with enterprise backends (order management, billing, CRM)

- Brand voice customization with guardrails and safety controls

- Omnichannel deployment across voice, chat, and messaging

Pricing: Enterprise only; custom pricing not publicly disclosed. Reported minimum engagement of $100,000+/year.

Pros: Powerful autonomous action capabilities; strong founding team and funding; deep enterprise integration; excellent safety and guardrail framework

Cons: High price floor excludes SMBs; limited public documentation; relatively new platform still maturing; small customer base for social proof

Rating: Not yet rated (limited public availability)

9. SuperMIA

SuperMIA (My Intelligent Assistant) is a conversational AI platform that has built its reputation on high-volume, multi-industry voice automation. With over 90 million calls answered and 120 million unique users across 50+ enterprise clients, SuperMIA has proven scale in industries including healthcare, hospitality, real estate, aviation, education, and e-commerce. Its pay-per-task pricing model is distinctive in a market dominated by per-minute billing.

Best for: Mid-to-large businesses needing multilingual, multi-industry voice automation at scale, particularly in healthcare, hospitality, and real estate

Key Features:

- MIA Voice Bot for inbound and outbound automated calls with hybrid streaming architecture

- MIA Agents marketplace for pre-built, industry-specific agent templates

- Personalized MIA for custom AI solutions tailored to specific business processes

- Multi-channel deployment (voice, chat, and custom channels from a unified platform)

- Pay-per-task pricing aligned to business outcomes rather than call duration

Pricing: Pay-per-task model — you pay for completed actions (appointments booked, leads qualified, orders processed) rather than per minute. Custom pricing based on volume and use case. Reported as cost-competitive with per-minute alternatives for high-volume deployments.

Pros: Proven scale (90M+ calls is among the highest in this list); pay-per-task pricing aligns costs with business value; strong multi-industry coverage; multilingual capabilities

Cons: Less brand recognition than some competitors in the North American developer community; not open-source or API-first (less suited for developers wanting to build from scratch); pricing requires sales conversation

Rating: 4.4/5

10. Synthflow

Synthflow is a no-code AI voice agent platform designed for non-technical users — agencies, small businesses, and solopreneurs. Its drag-and-drop workflow builder lets users create voice agents without writing code, and its white-label capabilities make it popular with agencies reselling voice AI to their clients.

Best for: Marketing agencies, small businesses, and non-technical teams that need voice agents without development resources

Key Features:

- No-code visual builder with drag-and-drop workflow design

- White-label options for agencies and resellers

- Pre-built templates for common use cases (appointment booking, lead qualification)

- Integration with popular CRMs (HubSpot, GoHighLevel) and calendars

Pricing: Plans starting at approximately $29/month (Starter) with limited minutes; Pro plans at $99-$450/month with higher limits. Per-minute overage charges apply.

Pros: Lowest barrier to entry in this list; excellent for agencies; rapid deployment (minutes, not days); affordable starting price

Cons: Voice quality and latency trail developer-focused competitors; limited customization at lower tiers; analytics are basic; can become expensive at high volumes due to overage pricing

Rating: 4.5/5 on G2 (50+ reviews)

11. Vapi

Vapi is a developer-first voice AI infrastructure platform that provides the plumbing for building and deploying AI voice agents at scale. It offers modular, composable components — you choose your STT, LLM, and TTS providers and Vapi orchestrates the pipeline with optimized latency. Vapi has become one of the most popular choices among developers building voice AI products.

Best for: Developers and technical teams building custom voice AI products that require full control over the tech stack

Key Features:

- Modular architecture: mix and match STT (Deepgram, Google, etc.), LLM (OpenAI, Anthropic, custom), and TTS (ElevenLabs, PlayHT, etc.) providers

- Function calling and tool use for dynamic agent actions

- Low-latency streaming pipeline with sub-second response times

- Server-side and client-side SDKs (Python, Node.js, React, Swift)

- Built-in phone number provisioning and SIP trunking

Pricing: Pay-per-minute starting at approximately $0.05/min (plus underlying provider costs for STT, LLM, TTS). Free tier available for testing. The total cost depends heavily on which providers you select.

Pros: Maximum flexibility and control; excellent documentation; very active developer community; competitive base pricing; rapid feature releases

Cons: Total cost can be opaque (base fee + provider fees); requires technical expertise; you're responsible for prompt engineering and optimization; enterprise features are newer

Rating: 4.6/5 on G2 (90+ reviews)
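To make the "base fee plus provider fees" point concrete, here is a small cost sketch. Only the $0.05/min base fee comes from the pricing above; the STT, LLM, and TTS rates are hypothetical placeholders chosen for illustration, so substitute the actual rates from whichever providers you select.

```python
from decimal import Decimal

BASE_PER_MIN = Decimal("0.05")  # platform base fee quoted above

# Hypothetical provider rates per minute, for illustration only.
EXAMPLE_PROVIDER_RATES = {
    "stt": Decimal("0.01"),
    "llm": Decimal("0.02"),
    "tts": Decimal("0.04"),
}


def effective_rate(base: Decimal, provider_rates: dict[str, Decimal]) -> Decimal:
    """Per-minute cost once every provider in the pipeline is included."""
    return base + sum(provider_rates.values(), Decimal("0"))


def monthly_cost(minutes: int, base: Decimal, provider_rates: dict[str, Decimal]) -> Decimal:
    """Total bill for a month at the effective per-minute rate."""
    return effective_rate(base, provider_rates) * minutes


rate = effective_rate(BASE_PER_MIN, EXAMPLE_PROVIDER_RATES)
bill = monthly_cost(10_000, BASE_PER_MIN, EXAMPLE_PROVIDER_RATES)
```

With these placeholder rates the effective cost is $0.12/min, or $1,200 for 10,000 minutes, more than double what the base fee alone would suggest. `Decimal` is used instead of floats so the currency arithmetic stays exact.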

12. Voiceflow

Voiceflow is a conversation design platform that has expanded from chatbot building into voice agent development. Its visual canvas for designing conversation flows is among the most intuitive in the market, making it a favorite among conversation designers, product teams, and agencies. Voiceflow added voice channel support through integrations with telephony providers.

Best for: Conversation design teams and product managers who want visual control over complex dialog flows

Key Features:

- Best-in-class visual conversation design canvas

- Collaborative workspace for teams (version control, commenting, sharing)

- Knowledge base integration with RAG for grounded responses

- API-based extensibility with custom functions and integrations

- Multi-channel deployment (chat, voice, and custom channels)

Pricing: Free tier (limited to sandbox); Pro at $50/month per editor; Teams at $625/month. Voice capabilities require additional telephony integration costs.

Pros: Best visual conversation design tool in the market; strong team collaboration features; growing ecosystem of templates and integrations; good for rapid prototyping

Cons: Voice is not the primary focus (chat-first heritage); telephony requires third-party integration; can be complex for simple use cases; pricing per editor can add up for larger teams

Rating: 4.6/5 on G2 (130+ reviews)

4. Comparison Table

| Platform | Best For | Pricing Model | Languages | Key Differentiator | Rating |
|---|---|---|---|---|---|
| Ada.cx | Enterprise support teams with existing Ada chat | Custom enterprise ($10K-$50K+/mo) | 50+ | Unified chat + voice with deep helpdesk integrations | 4.6/5 |
| Bland AI | Developers needing rapid deployment | $0.09-$0.12/min | 10+ | Fastest time-to-first-call; simple API | 4.3/5 |
| Cognigy | Enterprise contact centers (EU focus) | Enterprise ($30K-$150K+/yr) | 25+ | On-premise deployment; GDPR-native; contact center integrations | 4.6/5 |
| Dialpad | Teams wanting AI-enhanced phone system | $25-$95/user/mo | 15+ | Unified communications + AI in one platform | 4.4/5 |
| ElevenLabs | Premium voice quality use cases | From $0.08/min | 29+ | Best voice naturalness (MOS 4.2-4.4); voice cloning | 4.7/5 |
| PolyAI | Enterprise hospitality and healthcare | Custom enterprise ($25K+ deployment) | 10+ | Fully managed; best hospitality domain expertise | 4.5/5 |
| Retell AI | Developer teams building custom agents | $0.07-$0.10/min | 15+ | Best price-to-performance ratio; clean API | 4.5/5 |
| Sierra AI | Large enterprises needing autonomous agents | Enterprise ($100K+/yr) | 12+ | Action-oriented agents; can process transactions | N/A |
| SuperMIA | Multi-industry high-volume automation | Pay-per-task (custom) | 20+ | 90M+ calls proven scale; pay-per-task pricing; multi-industry | 4.4/5 |
| Synthflow | Agencies and non-technical teams | $29-$450/mo + overages | 12+ | No-code builder; white-label for agencies | 4.5/5 |
| Vapi | Developers wanting full stack control | $0.05/min + provider costs | 20+ | Modular architecture; mix-and-match providers | 4.6/5 |
| Voiceflow | Conversation designers and product teams | Free tier; Pro $50/editor/mo; Teams $625/mo | 15+ | Best visual conversation design canvas | 4.6/5 |

5. 10-Point Evaluation Framework: How to Choose the Right AI Voice Agent

Choosing the right AI voice agent platform requires evaluating more than just features. This 10-point framework covers the criteria that separate production-ready platforms from impressive demos.

1. Latency and Response Time

Why it matters: Latency is the most direct predictor of caller satisfaction and call completion rates. A delay of just 500ms beyond the natural conversational threshold causes callers to repeat themselves, talk over the agent, or hang up. In testing, we observed that platforms with sub-800ms latency achieved 23% higher task completion rates than those averaging over 1,200ms.

What to look for: End-to-end latency below 1,000ms in real-world conditions (not just lab benchmarks); consistent performance under load (latency should not spike during peak hours); configurable endpoint detection to minimize false turn-taking.

Red flags: Platforms that only quote TTS latency (ignoring STT and LLM processing time); no published latency metrics; demos that feel fast but production deployments add significant overhead.
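To make "end-to-end" concrete, the sketch below sums the per-stage delays for one conversational turn and reports a nearest-rank percentile across test calls. The function names and the sample timings are illustrative assumptions, not measurements from any platform in this guide.

```python
def turn_latency_ms(stt_ms, llm_ms, tts_ms, network_ms=0):
    """Total delay the caller hears for one turn: every stage counts, not just TTS."""
    return stt_ms + llm_ms + tts_ms + network_ms


def percentile(samples, pct):
    """Nearest-rank percentile (pct is an integer from 1 to 100)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = -(-pct * len(ordered) // 100)  # ceiling division on integers
    return ordered[max(rank, 1) - 1]


# Hypothetical per-turn measurements (STT, LLM, TTS, network) in milliseconds.
turns = [(180, 420, 140, 60), (200, 650, 150, 60), (190, 380, 145, 70), (210, 900, 160, 65)]
totals = [turn_latency_ms(*t) for t in turns]
print("median:", percentile(totals, 50), "ms; p95:", percentile(totals, 95), "ms")
```

Tracking a high percentile alongside the median is a deliberate choice here: a platform can look fast on average while its slowest turns are the ones that make callers talk over the agent.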

2. Voice Quality and Naturalness

Why it matters: Voice quality determines whether callers perceive the agent as helpful or irritating. Robotic-sounding voices increase hang-up rates and damage brand perception. A 2025 Zendesk study found that 71% of consumers say they would disengage from a call if the AI voice sounded noticeably artificial.

What to look for: MOS (Mean Opinion Score) above 4.0; natural prosody (rhythm, emphasis, and intonation that matches the sentence meaning); consistent quality across languages; the ability to customize or clone a brand-specific voice.

Red flags: Platforms that sound great in English but noticeably degrade in other languages; voices that sound natural for short phrases but become monotonous in longer responses; no option to preview or test voices before deployment.

3. Language and Accent Support

Why it matters: For businesses serving multilingual populations — or operating across borders — language support is not optional. True multilingual capability means more than just translating prompts; it requires STT models trained on accented speech, LLMs that reason naturally in each language, and TTS that produces native-quality pronunciation.

What to look for: Native-quality performance (not just translation) in your required languages; accent robustness in STT (e.g., Indian English, Southern American English, Australian English); code-switching handling for bilingual callers; per-language MOS scores.

Red flags: "100+ languages supported" claims with no per-language quality data; all voices sounding American regardless of language; inability to handle mixed-language conversations.

4. Integration Ecosystem

Why it matters: An AI voice agent that cannot connect to your CRM, calendar, ticketing system, or order management platform is just an expensive answering machine. The value of voice AI comes from taking actions — booking appointments, updating records, looking up order status — and that requires robust integrations.

What to look for: Pre-built integrations with your existing tools (Salesforce, HubSpot, Zendesk, Epic, etc.); webhook and API support for custom integrations; real-time data retrieval during calls (not just post-call syncing); function calling or tool-use capabilities in the LLM layer.

Red flags: Integrations listed as "coming soon" for months; integrations that only sync data post-call rather than in real-time during the conversation; requiring expensive middleware or professional services for basic CRM connections.
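As a rough illustration of what "function calling" means during a live call, the sketch below routes a tool request emitted by the LLM layer to a backend lookup and returns the result as JSON. The tool name, the payload shape, and the in-memory order table are all hypothetical; each platform defines its own schema.

```python
import json

# Stand-in for a real order management system (hypothetical data).
ORDERS = {"A1001": "shipped"}


def lookup_order_status(order_id: str) -> dict:
    """Fetch order status in real time so the agent can answer mid-call."""
    status = ORDERS.get(order_id)
    if status is None:
        return {"found": False}
    return {"found": True, "status": status}


# Registry of tools the agent is allowed to call.
TOOLS = {"lookup_order_status": lookup_order_status}


def dispatch_tool_call(raw_call: str) -> str:
    """Run the tool named in the LLM's JSON request and return a JSON result."""
    call = json.loads(raw_call)
    handler = TOOLS.get(call["name"])
    if handler is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    return json.dumps(handler(**call["arguments"]))


request = '{"name": "lookup_order_status", "arguments": {"order_id": "A1001"}}'
print(dispatch_tool_call(request))
```

Keeping an explicit registry means the agent can only trigger actions you have deliberately exposed, which is worth asking vendors about when they describe their tool-use support.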

5. Compliance and Security

Why it matters: Depending on your industry, non-compliance can result in fines ranging from $10,000 to $50,000 per violation (HIPAA) or $500 to $1,500 per call (TCPA). Beyond fines, a data breach involving call recordings or transcripts can be catastrophic for brand trust.

What to look for: Relevant certifications for your industry (HIPAA BAA for healthcare, SOC 2 Type II for enterprise, PCI DSS for payment handling); GDPR compliance for European operations; TCPA compliance features (consent tracking, do-not-call list management, calling hour restrictions); encryption of data in transit and at rest; data retention controls.

Red flags: Claiming "HIPAA-compliant" without offering a signed Business Associate Agreement; no SOC 2 report available upon request; vague answers about data storage location and retention; no option to delete call recordings.
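Calling-hour restrictions are one of the easier TCPA features to reason about in code. The sketch below checks whether an outbound call falls inside an allowed window in the called party's local time. The 8 a.m. to 9 p.m. default is the commonly cited federal window and is treated here as a configurable assumption; state rules can be stricter, so confirm the limits with counsel. The function name is illustrative.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo


def within_calling_hours(now_utc: datetime, callee_tz: str,
                         start: time = time(8, 0), end: time = time(21, 0)) -> bool:
    """True if the callee's local time is inside [start, end)."""
    if now_utc.tzinfo is None:
        raise ValueError("now_utc must be timezone-aware")
    local = now_utc.astimezone(ZoneInfo(callee_tz))
    return start <= local.time() < end


morning = datetime(2026, 1, 15, 14, 0, tzinfo=timezone.utc)  # 9:00 a.m. in New York
late = datetime(2026, 1, 15, 3, 0, tzinfo=timezone.utc)      # 10:00 p.m. the previous evening
print(within_calling_hours(morning, "America/New_York"))
print(within_calling_hours(late, "America/New_York"))
```

The check uses the callee's time zone rather than the caller's, which is the detail most often missed when a single campaign dials across several regions.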

6. Pricing Transparency

Why it matters: AI voice agent pricing is notoriously opaque. In the worst case, a platform advertising $0.07/min can end up costing as much as $0.25/min once you add telephony fees, LLM API costs, premium voice charges, and overage penalties. On average, in our testing, hidden costs inflated the advertised price by 40-60%.

What to look for: All-inclusive per-minute pricing (or clear documentation of what's included versus extra); published pricing on the website (or at least in sales materials); no or transparent overage charges; the ability to estimate monthly costs before signing a contract.

Red flags: "Contact sales for pricing" with no published ranges; pricing that excludes telephony or LLM costs; per-minute charges that vary based on which TTS or STT engine the platform selects; minimum commitments or long-term contracts required.

7. Scalability

Why it matters: Your voice agent needs to handle your call volume today and scale to 10x that volume without degradation. Many platforms perform well at 100 concurrent calls but degrade significantly at 1,000 or 10,000.

What to look for: Published concurrency limits; auto-scaling infrastructure; performance SLAs with guaranteed uptime (99.9% minimum for production); geographic distribution for global deployments; load testing results or references from customers at comparable scale.

Red flags: No published uptime SLA; single-region deployment; platforms that have experienced publicized outages without post-mortems; no reference customers at your target scale.
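Uptime percentages are easier to compare once converted into allowed downtime. A minimal worked example, assuming a 30-day month (the function name is illustrative):

```python
def allowed_downtime_minutes(uptime_pct: float, period_days: int = 30) -> float:
    """Minutes of downtime an SLA permits over the period."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - uptime_pct / 100)


for sla in (99.0, 99.9, 99.99):
    print(f"{sla}% uptime allows about {allowed_downtime_minutes(sla):.1f} minutes of downtime per 30 days")
```

At 99.9% the budget is roughly 43 minutes a month, so a single hour-long outage already breaches the minimum suggested above.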

8. Customization and Brand Voice

Why it matters: Your AI voice agent represents your brand on every call. Generic voices and one-size-fits-all personas erode brand identity. Callers should feel like they are interacting with your company, not a generic AI service.

What to look for: Custom voice creation or cloning; configurable personality traits (formal vs. casual, empathetic vs. efficient); brand-specific vocabulary and terminology; customizable hold music and transfer messages; the ability to adjust response length and detail level.

Red flags: Limited to a small selection of stock voices; no control over agent personality or conversation style; inability to add industry-specific terminology or brand language.

9. Analytics and Reporting

Why it matters: Without robust analytics, you cannot optimize your voice agent's performance over time. You need to know which calls succeed, where conversations break down, what callers are asking about, and how your agent's performance trends over weeks and months.

What to look for: Call-level transcripts and recordings (with appropriate consent); conversation flow analysis showing where callers drop off; intent detection and topic clustering; sentiment analysis; custom dashboard and reporting; API access to analytics data for integration with BI tools.

Red flags: Only basic metrics (call count, duration); no transcript access; analytics dashboard that has not been updated in months; inability to export data; no real-time monitoring for live calls.

10. Support and Documentation

Why it matters: Even the best platform requires support during implementation, optimization, and incident response. Poor documentation leads to longer implementation times and more bugs; poor support leads to prolonged outages and missed revenue.

What to look for: Comprehensive, up-to-date documentation with working code examples; responsive technical support (email, chat, or phone); dedicated customer success manager for enterprise accounts; active community (Discord, forum, or GitHub) for peer support; regular platform updates with published changelogs.

Red flags: Documentation that is clearly outdated or incomplete; support only via email with multi-day response times; no developer community; platform updates that break existing functionality without notice.

6. Build vs. Buy: When to Use a Platform vs. Build Your Own

The build-vs-buy decision for AI voice agents is more nuanced in 2026 than it was two years ago. The availability of high-quality APIs for each component of the voice stack (Deepgram for STT, OpenAI or Anthropic for LLMs, ElevenLabs for TTS, Twilio for telephony) means building a custom solution is technically feasible. But feasible and advisable are different things.

Three Approaches to Deploying AI Voice Agents

API-First / Build Your Own (Vapi, Retell AI, Bland AI):

You assemble the voice agent from modular components, writing code to orchestrate the STT-LLM-TTS pipeline, manage conversation state, handle telephony, and build integrations. Platforms like Vapi and Retell provide the orchestration layer, but you still own the prompt engineering, testing, and optimization.

- Best when: You have a dedicated engineering team (3+ developers); your use case is highly custom or requires proprietary models; you're building voice AI as a core product feature, not just an operational tool.

- Typical team size: 3-5 engineers plus a conversation designer

- Time to production: 4-12 weeks

- Ongoing maintenance: Significant — prompt tuning, model updates, infrastructure monitoring

No-Code / Low-Code Platforms (Voiceflow, Synthflow):

You design conversation flows using visual builders, connect pre-built integrations, and deploy without writing code. These platforms handle the infrastructure, orchestration, and telephony.

- Best when: You lack engineering resources; your use case follows common patterns (appointment booking, FAQs, lead qualification); speed of deployment is critical.

- Typical team size: 1-2 non-technical users or a conversation designer

- Time to production: 1-3 weeks

- Ongoing maintenance: Low to moderate — flow adjustments, knowledge base updates

Full-Stack / Managed Solutions (SuperMIA, PolyAI, Cognigy, Ada.cx):

The platform provides end-to-end capabilities — from conversation design and telephony to integrations, compliance, and ongoing optimization. Some (like PolyAI) include managed services where the vendor builds and maintains the agent on your behalf. SuperMIA's approach of offering both self-serve configuration and managed deployment across multiple industries represents a middle ground.

- Best when: You need enterprise reliability, compliance certifications, and deep integrations; you want to focus on business outcomes, not infrastructure; you operate in a regulated industry.

- Typical team size: 1 project manager plus vendor support

- Time to production: 2-8 weeks (managed); 1-4 weeks (self-serve)

- Ongoing maintenance: Low — vendor handles infrastructure and often assists with optimization

Decision Matrix

| Factor | Build (API-First) | No-Code Platform | Full-Stack / Managed |
|---|---|---|---|
| Engineering team required | Yes (3-5 devs) | No | No (or minimal) |
| Time to deploy | 4-12 weeks | 1-3 weeks | 2-8 weeks |
| Customization depth | Maximum | Limited | Moderate to high |
| Monthly cost (1K calls/mo) | $500-$2,000 | $100-$500 | $1,000-$5,000 |
| Monthly cost (50K calls/mo) | $5,000-$15,000 | $3,000-$10,000 | $8,000-$30,000 |
| Maintenance burden | High | Low | Very low |
| Compliance readiness | You build it | Variable | Typically included |
| Scalability risk | You manage it | Platform handles | Platform handles |

Total Cost of Ownership (TCO) Comparison — 12-Month View

For a business handling 20,000 calls per month with an average call duration of 3 minutes:

| Cost Component | Build (API-First) | No-Code Platform | Full-Stack Managed |
|---|---|---|---|
| Platform/API fees | $18,000-$36,000 | $12,000-$30,000 | $48,000-$120,000 |
| Engineering (build) | $50,000-$100,000 | $0 | $0 |
| Engineering (maintain) | $40,000-$80,000 | $5,000-$10,000 | $0-$5,000 |
| Telephony | $6,000-$12,000 | Often included | Included |
| LLM API costs | $12,000-$36,000 | Often included | Included |
| 12-month TCO | $126,000-$264,000 | $17,000-$40,000 | $48,000-$125,000 |

The no-code approach is cheapest but limits customization. Building delivers the most control but costs the most when accounting for engineering time. Full-stack solutions sit in between, with the premium going toward compliance, reliability, and vendor-managed optimization.
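The 12-month totals in the table are simple column sums of the low and high ends of each range. The sketch below reproduces them so you can substitute your own quotes; "Included" and "Often included" line items are entered as zero, which is an assumption you should verify against each contract.

```python
# (low, high) annual cost ranges in USD, taken from the TCO table above.
BUILD = {
    "platform": (18_000, 36_000),
    "engineering_build": (50_000, 100_000),
    "engineering_maintain": (40_000, 80_000),
    "telephony": (6_000, 12_000),
    "llm_api": (12_000, 36_000),
}
NO_CODE = {"platform": (12_000, 30_000), "engineering_maintain": (5_000, 10_000)}
FULL_STACK = {"platform": (48_000, 120_000), "engineering_maintain": (0, 5_000)}


def tco_range(components: dict) -> tuple:
    """Sum the low ends and the high ends separately."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


for name, parts in (("Build", BUILD), ("No-code", NO_CODE), ("Full-stack", FULL_STACK)):
    low, high = tco_range(parts)
    print(f"{name}: ${low:,}-${high:,}")
```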

When Custom Makes Sense vs. When It Is Wasteful

Build custom when:

- Voice AI is your core product (you are building a voice AI company)

- You need proprietary models trained on your specific domain data

- Your use case is genuinely novel and no existing platform supports it

- You have a team that will maintain the system for years

Use a platform when:

- Voice AI is an operational tool, not your core product

- Your use case is well-established (support, scheduling, lead qualification)

- You need compliance certifications you cannot build yourself (HIPAA, SOC 2)

- Speed to market matters more than maximum customization

7. AI Voice Agent Use Cases by Industry

AI voice agents are being deployed across every major industry, but the specific use cases, compliance requirements, and ROI drivers differ significantly. Below are the industries where we see the strongest adoption, with concrete examples and measurable outcomes.

Healthcare

Healthcare represents one of the highest-value applications for AI voice agents, driven by the sheer volume of routine calls (appointment scheduling, prescription refills, insurance verification) and the high cost of clinical staff time.

Primary use cases:

- Appointment scheduling and rescheduling (40-60% of all inbound healthcare calls)

- Patient follow-up and post-discharge check-ins

- Prescription refill requests and pharmacy routing

- Insurance verification and pre-authorization status

- Appointment reminders and no-show reduction

Case Study — Large Multi-Specialty Clinic:

Challenge: A 200-physician multi-specialty practice was handling 15,000 inbound calls per week, with 35% of callers abandoning due to hold times averaging 8 minutes. Staff turnover in the call center exceeded 45% annually.

Solution: Deployed an AI voice agent for appointment scheduling, rescheduling, and basic triage routing, integrated with Epic EHR via HL7 FHIR APIs.

Result: Call abandonment dropped from 35% to 8%. Average hold time reduced from 8 minutes to under 30 seconds. The agent autonomously handled 72% of scheduling calls. Estimated annual savings of $1.2 million in staffing costs. Patient satisfaction (CSAT) scores for phone interactions increased from 3.2 to 4.1 on a 5-point scale.

Compliance note: Healthcare voice agents must operate under a signed HIPAA Business Associate Agreement (BAA), encrypt all PHI in transit and at rest, and provide audit logging. Platforms like SuperMIA and PolyAI offer HIPAA-compliant configurations specifically designed for healthcare deployments.

Hospitality

Hotels, resorts, and restaurant groups handle massive volumes of repetitive calls — reservation inquiries, room service orders, amenity questions, and concierge requests. AI voice agents excel here because the conversations are structured, high-frequency, and directly tied to revenue.

Primary use cases:

- Room reservations and modification (check-in/check-out dates, room type changes)

- Concierge services (restaurant recommendations, local attractions, transportation)

- Room service and amenity requests

- Loyalty program inquiries

- Post-stay follow-up and review solicitation

- Revenue-generating upsells (room upgrades, spa packages, dining reservations)

Case Study — Boutique Hotel Group (12 Properties):

Challenge: Front desk staff spent 3+ hours daily answering repetitive phone calls, diverting attention from in-person guest service. After-hours calls (30% of total volume) went to voicemail, and 60% of those callers never called back.

Solution: Implemented a voice AI agent handling reservations, amenity questions, and basic concierge requests across all 12 properties, with live-agent escalation for complex requests.

Result: 68% of inbound calls resolved without human intervention. After-hours booking capture increased by 40%, generating an estimated $180,000 in additional annual revenue. Guest satisfaction scores remained stable (no negative impact from AI interaction). Front desk staff reported significantly improved ability to focus on in-person guests.

Real Estate

Real estate businesses live and die by lead response time. Studies consistently show that responding to a lead inquiry within 5 minutes is 21 times more effective than responding within 30 minutes (Harvard Business Review). AI voice agents provide instant response at any hour.

Primary use cases:

- Inbound lead qualification (budget, timeline, location preferences, financing status)

- Property information delivery (price, features, availability, neighborhood details)

- Tour scheduling and confirmation

- Outbound follow-up with leads who submitted web forms

- Post-tour feedback collection

Case Study — Regional Real Estate Brokerage:

Challenge: A 50-agent brokerage received 800+ web leads per month but only contacted 40% within the first hour. Leads contacted after 24 hours converted at one-fifth the rate of those contacted within 5 minutes.

Solution: Deployed an AI voice agent to instantly call every web lead, qualify interest, answer property questions from the MLS database, and schedule tours directly on agents' calendars.

Result: Lead contact rate within 5 minutes jumped from 12% to 95%. Tour scheduling rate increased by 35%. Agents reported spending 60% more time on high-value activities (showings, negotiations) versus phone qualification. Estimated 28% increase in closed transactions attributable to faster lead response.

E-Commerce

E-commerce companies deal with enormous volumes of repetitive post-purchase calls — "Where's my order?", "How do I return this?", "Can I change my shipping address?" AI voice agents handle these high-frequency, low-complexity calls exceptionally well.

Primary use cases:

- Order status and tracking updates

- Return and exchange initiation

- Shipping address changes

- Product availability inquiries

- Warranty and product information

- Refund status checks

Case Study — D2C Fashion Brand (500K Orders/Year):

Challenge: Customer service team of 25 agents handled 12,000 calls per month, with 55% being order-status inquiries that required agents to simply read tracking information from the OMS.

Solution: Implemented an AI voice agent integrated with Shopify and ShipStation to handle order status, tracking, return initiation, and basic product questions.

Result: AI agent resolved 78% of order-status calls without escalation. Human agents were able to focus on complex issues (damaged items, complaints, VIP customers). Average call wait time dropped from 6 minutes to under 15 seconds. Customer service costs per order decreased by 42%.

Education

Educational institutions — from universities to online learning platforms — manage high volumes of enrollment, registration, financial aid, and student support inquiries, particularly during peak periods (enrollment windows, financial aid deadlines, start-of-term).

Primary use cases:

- Enrollment and admissions inquiries

- Course registration and scheduling

- Financial aid status and documentation requirements

- Campus information and event details

- Technical support for learning platforms

- Student feedback and surveys

Case Study — Online University (40,000 Students):

Challenge: During enrollment periods, the admissions call center experienced 300% volume surges, resulting in 25-minute average wait times and an estimated 15% loss in prospective student conversions.

Solution: Deployed an AI voice agent to handle initial admissions inquiries, program information, application status checks, and appointment scheduling with admissions counselors.

Result: Peak-period wait times decreased from 25 minutes to 2 minutes. Enrollment counselors focused on high-intent prospects rather than informational inquiries. Prospective student contact rate increased by 45%. Estimated 8% improvement in enrollment conversion.

Financial Services

Financial institutions face the dual challenge of high call volumes and strict regulatory requirements. AI voice agents in this sector must be accurate, compliant, and capable of seamless escalation to human agents for sensitive transactions.

Primary use cases:

- Account balance and transaction inquiries

- Fraud alert verification and card freeze/unfreeze

- Payment scheduling and loan information

- Branch location and hours

- Card activation and PIN resets

- Insurance claim status

Case Study — Regional Credit Union (200K Members):

Challenge: Call center handled 45,000 calls monthly with 60% being routine balance checks and transaction inquiries. After-hours calls represented 25% of volume but were routed to an outsourced center with poor satisfaction scores.

Solution: Implemented an AI voice agent with secure member authentication (voice biometrics + knowledge-based verification) to handle account inquiries, payment scheduling, and basic service requests 24/7.

Result: 65% of routine inquiries resolved by AI without escalation. After-hours member satisfaction increased from 2.8 to 4.2 out of 5. Annual outsourced call center costs reduced by $380,000. Zero security incidents in first 12 months of operation.

8. The Real Costs: Pricing Models and Hidden Fees

AI voice agent pricing is one of the most confusing areas in the market. Advertised rates rarely reflect the true cost of operation. This section breaks down every pricing model, exposes common hidden costs, and provides a framework for calculating your actual total spend.

Pricing Models Explained

Per-Minute Pricing:

The most common model. You pay a rate for each minute of call time. Rates typically range from $0.07 to $0.50 per minute depending on the platform, features, and volume tier.

- Low end ($0.07-$0.12/min): Retell AI, Bland AI, and Vapi (whose $0.05/min base rate excludes provider costs). Often excludes LLM API costs, premium voices, or telephony fees.

- Mid range ($0.12-$0.25/min): Most platforms with inclusive pricing (STT + LLM + TTS + telephony bundled).

- High end ($0.25-$0.50/min): Enterprise platforms with managed services, compliance features, and premium support (PolyAI, Ada.cx, Cognigy effective rates).

Per-Call Pricing:

Some platforms charge per call rather than per minute. This can be advantageous if your average call duration is long (over 5 minutes) but expensive for short calls.

Subscription / Seat-Based Pricing:

Platforms like Dialpad and Voiceflow charge monthly per user or per editor, with usage limits. This model is predictable but can become expensive as teams grow or call volumes spike.

Pay-Per-Task Pricing:

A model where you pay for completed business outcomes (appointments booked, leads qualified, orders processed) rather than raw call minutes. SuperMIA uses this model, and it aligns costs directly with business value. If the agent answers a call but the caller hangs up before a task is completed, you do not pay. This can be significantly cheaper for high-volume deployments where many calls are short or non-actionable.
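Whether pay-per-task is cheaper than per-minute pricing depends on call length and on how many calls end in a completed task. The sketch below compares the two bills and derives the break-even task price. All rates and the 60% completion rate are made-up inputs for illustration, not quotes from SuperMIA or any other vendor.

```python
def per_minute_bill(calls: int, avg_minutes: float, rate_per_minute: float) -> float:
    """Monthly bill when every minute of every call is charged."""
    return calls * avg_minutes * rate_per_minute


def per_task_bill(calls: int, completion_rate: float, price_per_task: float) -> float:
    """Monthly bill when only calls that complete a task are charged."""
    return calls * completion_rate * price_per_task


def break_even_task_price(avg_minutes: float, rate_per_minute: float,
                          completion_rate: float) -> float:
    """Task price at which both models cost the same."""
    return avg_minutes * rate_per_minute / completion_rate


# Hypothetical scenario: 20,000 calls, 3 minutes each, $0.10/min, 60% end in a task.
print(f"per-minute bill: ${per_minute_bill(20_000, 3, 0.10):,.0f}")
print(f"break-even task price: ${break_even_task_price(3, 0.10, 0.6):.2f}")
```

Any per-task quote below the break-even figure favors the pay-per-task model for that traffic profile; a lower completion rate pushes the break-even price higher.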

Hidden Costs to Watch For

Based on our analysis, these are the costs that most frequently surprise buyers:

1. Telephony Fees ($0.01-$0.04/min):

Many platforms quote AI processing costs but exclude the cost of the actual phone call. Twilio charges approximately $0.013/min for inbound and $0.014/min for outbound in the US. This adds up: at 20,000 calls/month averaging 3 minutes each (60,000 minutes), telephony alone costs about $780/month at $0.013/min and up to $2,400/month at $0.04/min.

2. LLM API Costs ($0.005-$0.03/min):

If the platform passes through LLM costs (common with Vapi, Retell, and other API-first platforms), you pay for every token processed. A typical 3-minute call generates 500-1,500 tokens of input/output, costing $0.01-$0.05 per call at GPT-4o rates.

3. Premium Voice Charges ($0.01-$0.05/min):

Many platforms offer basic voices for free but charge extra for high-quality voices (especially ElevenLabs-powered). This premium can add $0.01-$0.05 per minute.

4. Overage Penalties (20-100% markup):

Platforms with tiered subscriptions (Synthflow, Voiceflow) often charge significantly higher rates for minutes that exceed your plan limit. A platform charging $0.10/min in-plan may charge $0.15-$0.20/min for overage.

5. Integration and Setup Fees ($500-$25,000):

Some enterprise platforms charge one-time setup fees, custom integration development costs, or professional services fees for implementation.

6. Number Provisioning ($1-$5/month per number):

If you need dedicated phone numbers (local, toll-free, international), each number carries a monthly fee plus per-minute usage charges.

7. Storage and Compliance Costs ($100-$500/month):

Call recording storage, HIPAA-compliant infrastructure, and data retention in specific regions can add recurring costs.
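To see how these line items stack up, the sketch below adds per-minute extras to an advertised rate and reports the resulting markup. The specific add-on values are example picks from within the ranges listed above, not any vendor's actual price sheet.

```python
def effective_rate(advertised: float, telephony: float = 0.0,
                   llm: float = 0.0, premium_voice: float = 0.0) -> float:
    """Per-minute rate once pass-through charges are added."""
    return advertised + telephony + llm + premium_voice


def markup_percent(advertised: float, effective: float) -> float:
    """How far the effective rate sits above the advertised one."""
    return (effective - advertised) / advertised * 100


def monthly_cost(rate_per_minute: float, calls: int, avg_minutes: float) -> float:
    return rate_per_minute * calls * avg_minutes


rate = effective_rate(0.07, telephony=0.013, llm=0.01, premium_voice=0.02)
print(f"effective rate: ${rate:.3f}/min ({markup_percent(0.07, rate):.0f}% above advertised)")
print(f"monthly bill at 20,000 calls x 3 min: ${monthly_cost(rate, 20_000, 3):,.0f}")
```

One-time and fixed monthly items (setup fees, number provisioning, storage) are left out of the per-minute figure on purpose; add them separately when comparing quotes.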

ROI Calculation Framework

To calculate the ROI of an AI voice agent deployment, use this framework:

Annual Costs Without AI Voice Agent:

- (A) Human agent fully-loaded salary: $45,000-$65,000/year

- (B) Number of agents handling routine calls: _____

- (C) Total routine call staffing cost: A x B = _____

- (D) Recruiting and training cost per agent (with ~30-40% turnover): $5,000-$8,000/agent/year

- (E) Infrastructure and management overhead: 20-30% of C

Annual Costs With AI Voice Agent:

- (F) Platform costs (monthly fee x 12): _____

- (G) Remaining human agents for complex calls and escalations: _____

- (H) Human agent costs for remaining staff: A x G = _____

- (I) Implementation and optimization costs: _____

ROI = ((C + (D x B) + E) - (F + H + I)) / (F + H + I) x 100

Example: A business with 8 human agents ($55,000 each) deploys an AI voice agent that handles 70% of calls. They reduce to 3 human agents for escalations and complex cases.

- Before: $440,000 (salaries) + $40,000 (turnover) + $110,000 (overhead) = $590,000/year

- After: $72,000 (platform) + $165,000 (3 agents) + $15,000 (implementation) = $252,000/year

- Annual savings: $338,000 | ROI: 134% ($338,000 / $252,000)
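The same framework can be expressed as a few small functions. This is a sketch under the example's own assumptions: a $5,000 turnover cost per agent and a 25% overhead rate are the values that reproduce the $40,000 and $110,000 figures above, and ROI is taken as savings divided by the annual cost with the AI agent in place.

```python
def annual_cost_before(agents: int, salary: float,
                       turnover_cost_per_agent: float, overhead_rate: float) -> float:
    staffing = agents * salary                   # (C) = A x B
    turnover = agents * turnover_cost_per_agent  # (D) applied to every agent
    overhead = overhead_rate * staffing          # (E) as a share of C
    return staffing + turnover + overhead


def annual_cost_after(platform: float, remaining_agents: int,
                      salary: float, implementation: float) -> float:
    return platform + remaining_agents * salary + implementation  # F + H + I


def roi_percent(before: float, after: float) -> float:
    """Savings as a percentage of the new annual cost."""
    return (before - after) / after * 100


before = annual_cost_before(8, 55_000, 5_000, 0.25)
after = annual_cost_after(72_000, 3, 55_000, 15_000)
print(f"before=${before:,.0f}  after=${after:,.0f}  savings=${before - after:,.0f}")
print(f"ROI={roi_percent(before, after):.0f}%")
```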

TCO Comparison Table (20,000 Calls/Month, 3-Min Average)

| Cost Component | Low-Cost Platform | Mid-Range Platform | Enterprise Managed |
|---|---|---|---|
| Base AI processing | $8,400/yr | $21,600/yr | $36,000/yr |
| Telephony | $9,360/yr | Included | Included |
| LLM API passthrough | $7,200/yr | Included | Included |
| Premium voice | $4,320/yr | $4,320/yr | Included |
| Overages (estimated) | $3,600/yr | $1,200/yr | $0 |
| Support/SLA | $0 | $6,000/yr | Included |
| Compliance features | $2,400/yr | $4,800/yr | Included |
| Annual Total | $35,280 | $37,920 | $36,000 |
| Effective $/min | $0.049 | $0.053 | $0.050 |

Note: At high volumes, the effective per-minute cost across platform tiers converges. The differentiation is in features, reliability, and support — not price. Pay-per-task models like SuperMIA's can further reduce effective costs when a significant percentage of calls are short or do not result in completed tasks.

9. When AI Voice Agents Fail: Honest Limitations

No technology guide is complete without an honest assessment of limitations. AI voice agents have improved dramatically, but they still fail in predictable ways. Understanding these failure modes helps you design better systems, set realistic expectations, and know when human agents remain essential.

Complex Emotional Conversations

AI voice agents struggle with conversations that require genuine emotional intelligence — a grieving customer, an angry caller who needs to feel heard, or a sensitive situation requiring judgment and empathy. Current LLMs can simulate empathetic language, but callers often perceive it as hollow. In these situations, human agents outperform AI by a significant margin in satisfaction scores.

Mitigation: Implement robust sentiment detection that triggers automatic escalation to human agents when emotional distress or anger is detected. Design the AI to acknowledge emotion explicitly ("I understand this is frustrating") and offer human transfer proactively rather than continuing to attempt resolution.
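One simple way to structure such an escalation rule is sketched below: hand off when the caller explicitly asks for a person, or when sentiment stays strongly negative for consecutive turns. The sentiment score (assumed to come from an upstream classifier, ranging from -1 to 1), the threshold, the window size, and the trigger phrases are all hypothetical and would need tuning on real calls.

```python
from collections import deque

HUMAN_REQUEST_PHRASES = ("speak to a person", "human", "representative")


class EscalationMonitor:
    """Tracks recent turn sentiment and decides when to transfer to a human."""

    def __init__(self, threshold: float = -0.5, window: int = 2):
        self.threshold = threshold
        self.recent = deque(maxlen=window)

    def should_escalate(self, sentiment: float, transcript: str) -> bool:
        self.recent.append(sentiment)
        text = transcript.lower()
        asked_for_human = any(phrase in text for phrase in HUMAN_REQUEST_PHRASES)
        # Require a full window of negative turns so one sharp remark does not trigger a transfer.
        sustained_negative = (
            len(self.recent) == self.recent.maxlen
            and all(score <= self.threshold for score in self.recent)
        )
        return asked_for_human or sustained_negative


monitor = EscalationMonitor()
print(monitor.should_escalate(-0.7, "My order is late again"))
print(monitor.should_escalate(-0.8, "This is ridiculous"))
```

Requiring sustained negativity trades a slightly slower handoff for fewer false transfers; an explicit request for a person bypasses the window entirely.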

Heavy Accent Handling

Despite improvements in ASR technology, speech recognition accuracy drops measurably for speakers with heavy accents, non-native speakers, and regional dialects. Best-in-class systems achieve 5-8% WER for standard American English but 15-25% WER for heavily accented speech — a 3x degradation that causes frequent misunderstandings.

Mitigation: Choose platforms with strong multilingual STT models (Deepgram and AssemblyAI lead here); implement confirmation loops ("Just to confirm, you said...") for critical information like names, addresses, and account numbers; allow callers to spell out information; offer text-based alternatives (SMS follow-up) when voice comprehension struggles.
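Word error rate (WER), the metric quoted above, is the word-level edit distance between what was said and what was transcribed, divided by the number of words spoken. A minimal sketch for scoring your own accent test set follows; the sample sentences are made up.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # Rolling-row Levenshtein distance computed over words instead of characters.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)


spoken = "my account number is five two nine"
heard = "my account number is nine two nine"
print(f"WER: {word_error_rate(spoken, heard):.1%}")
```

Running this per accent group on recorded test calls gives you the per-accent numbers that vendors rarely publish.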

Multi-Party Conversations

Current AI voice agents are designed for one-on-one conversations. When multiple people speak simultaneously — a couple calling about a shared account, a parent and child on the same line, or a conference-style call — the STT engine cannot reliably separate or attribute speakers, leading to confused transcripts and nonsensical responses.

Mitigation: This is a fundamental architectural limitation. Detect multi-speaker scenarios and escalate to human agents. Some platforms offer basic speaker diarization, but it is not reliable enough for real-time conversation management.

Noisy Environments

Callers in loud environments — construction sites, busy streets, airports, cars with open windows — produce audio that degrades STT accuracy significantly. Background noise can increase WER by 10-30 percentage points depending on noise type and intensity.

Mitigation: Use STT engines with noise-suppression preprocessing (Deepgram's Nova-2 handles noise better than most); implement automatic audio quality detection that adjusts the confirmation threshold (more confirmation loops when audio quality is poor); provide a fallback to text-based interaction (SMS or web) when audio quality is consistently below threshold.

Edge Cases and Hallucination Risks

LLMs can generate plausible-sounding but incorrect information, especially when asked about topics outside their knowledge base or when the knowledge base contains ambiguous information. In a voice context, hallucinations are particularly dangerous because callers cannot easily verify information and may act on incorrect data.

Mitigation: Use RAG (retrieval-augmented generation) with strict grounding: configure the LLM to answer only from retrieved documents; implement "I don't know" fallback responses rather than allowing the LLM to guess; use guardrails to prevent the agent from giving medical, legal, or financial advice outside its approved scope; log and review calls regularly to detect hallucinations.
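The grounding-plus-fallback pattern can be sketched as a gate in front of the LLM: if no retrieved passage is relevant enough, the agent declines instead of generating. The relevance scores, the 0.75 cutoff, and the `generate` callable are assumptions standing in for whatever retriever and model your platform uses.

```python
from typing import Callable, List, Optional, Tuple

FALLBACK = "I don't have that information. Let me connect you with a team member."


def grounded_context(passages: List[Tuple[str, float]],
                     min_score: float = 0.75) -> Optional[str]:
    """Keep only passages at or above the relevance cutoff; None if nothing qualifies."""
    kept = [text for text, score in passages if score >= min_score]
    return "\n".join(kept) if kept else None


def respond(passages: List[Tuple[str, float]],
            generate: Callable[[str], str]) -> str:
    """Answer from retrieved context, or fall back rather than let the model guess."""
    context = grounded_context(passages)
    if context is None:
        return FALLBACK
    return generate(context)


# Stand-in for an LLM call that is restricted to the supplied context.
def echo_model(context: str) -> str:
    return f"Per our policy: {context}"


print(respond([("Returns are accepted within 30 days.", 0.91)], echo_model))
print(respond([("Store hours are 9 to 5.", 0.30)], echo_model))
```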

When Human Agents Are Still Better

AI voice agents are not a universal replacement for human agents. Humans remain superior for:

- Negotiations requiring dynamic strategy adjustment

- Conversations requiring legal or regulatory judgment

- High-stakes complaints where the caller expects to speak with a person

- Situations requiring physical world awareness (verifying a caller's physical situation)

- Building long-term relationship rapport (sales account management, VIP customer retention)

The optimal deployment is hybrid: AI handles the routine 70-80% of calls, and human agents focus on the complex 20-30% where they add genuine value.

10. Implementation Roadmap: From Zero to Live in 30 Days

A typical AI voice agent deployment follows a four-week implementation cycle. Day numbers below count business days (five per week), so Day 20 closes Week 4. This roadmap assumes a mid-complexity deployment: standard integrations, moderate call volume, and no unusual compliance requirements. Complex deployments (healthcare with EHR integration, financial services with core banking) may require 8-12 weeks.

Week 1: Requirements and Vendor Selection

Days 1-2: Define Requirements

- Document your top 5 call types by volume (these should represent 70-80% of all calls)

- Identify required integrations (CRM, calendar, order management, knowledge base)

- Determine compliance requirements (HIPAA, TCPA, GDPR, PCI DSS)

- Set target metrics: containment rate, latency threshold, CSAT target

- Establish budget range and preferred pricing model

Days 3-4: Evaluate Vendors

- Request demos or trials from 2-3 shortlisted platforms

- Place test calls to each platform's demo agents

- Review documentation quality and integration guides

- Verify compliance certifications (request SOC 2 report, HIPAA BAA)

- Check reference customers in your industry

Day 5: Select Vendor and Kick Off

- Sign agreement and complete onboarding

- Assign internal project lead and stakeholders

- Schedule weekly check-in cadence with vendor

Week 2: Setup, Integration, and Knowledge Base

Days 6-7: Platform Configuration

- Set up account, provision phone numbers

- Select voice (or configure custom voice)

- Configure basic call flow for your top use case

- Connect telephony (SIP trunking or platform-provided numbers)

Days 8-9: Integration Development

- Connect CRM integration (Salesforce, HubSpot, etc.)

- Connect calendar integration (Google Calendar, Calendly, etc.)

- Set up webhook endpoints for custom actions

- Configure data mappings between voice agent and backend systems
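
As a rough illustration of the data-mapping step above, the sketch below translates values captured during a call into the field names a backend expects. The slot names and CRM field names (`FullName`, `Phone`, `RequestedSlot`) are hypothetical; a real mapping would follow your CRM's schema.

```python
# Hypothetical mapping from voice-agent slot names to backend field names.
FIELD_MAP = {
    "caller_name": "FullName",
    "callback_number": "Phone",
    "appointment_time": "RequestedSlot",
}


def to_crm_payload(slots: dict[str, str]) -> dict[str, str]:
    """Translate captured slots into a backend payload, failing loudly on gaps."""
    missing = [name for name in FIELD_MAP if name not in slots]
    if missing:
        raise ValueError(f"missing required slots: {missing}")
    return {FIELD_MAP[name]: slots[name] for name in FIELD_MAP}
```

Rejecting incomplete payloads at this boundary makes integration errors show up during Week 3 testing rather than as half-filled CRM records in production.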

Day 10: Knowledge Base and Training

- Upload FAQs, product documentation, and policy documents

- Configure RAG retrieval settings

- Write and test conversation prompts for top 5 call types

- Define escalation triggers and human handoff procedures
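
Escalation triggers are easier to review when written down as explicit rules. The following is a minimal sketch under assumed rules (an explicit request for a person, repeated failed turns, or a topic flagged as high risk); the pattern, the `MAX_FAILED_TURNS` value, and the function name are illustrative.

```python
import re

# Assumed phrases that signal the caller wants a person; extend from real transcripts.
HUMAN_REQUEST = re.compile(
    r"\b(human|agent|representative|manager|real person)\b", re.IGNORECASE
)
MAX_FAILED_TURNS = 2  # assumed limit on consecutive turns the agent failed to resolve


def should_escalate(utterance: str, failed_turns: int, high_risk_topic: bool) -> bool:
    """Return True when any configured trigger fires."""
    if HUMAN_REQUEST.search(utterance):
        return True
    if failed_turns >= MAX_FAILED_TURNS:
        return True
    return high_risk_topic
```

Keyword matching is deliberately crude; it will misfire on phrases like "travel agent", which is the kind of pattern the Week 3 transcript review is meant to catch.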

Week 3: Testing, QA, and Edge Case Handling

Days 11-13: Internal Testing

- Place 50-100 test calls covering all documented scenarios

- Test edge cases: hang-ups, long silences, interruptions, nonsensical input

- Verify integration accuracy (do appointments appear in the calendar? Do CRM records update?)

- Measure latency under realistic conditions

- Test escalation to human agents
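
For the latency measurement in this list, averages hide the slow calls that callers actually notice, so it helps to report percentiles. The sketch below assumes you have collected per-turn response latencies in milliseconds from the test calls; the function names and the target value passed to `meets_target` are illustrative.

```python
import statistics


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Summarize response latencies as median, 95th percentile, and maximum."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    # n=100 yields 99 cut points; index 49 is p50 and index 94 is p95.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(samples_ms)}


def meets_target(summary: dict[str, float], p95_target_ms: float) -> bool:
    """Compare the measured p95 against the latency threshold set in Week 1."""
    return summary["p95"] <= p95_target_ms
```

Judging the p95 rather than the mean against the Week 1 latency threshold keeps a handful of fast turns from masking a slow tail.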

Days 14-15: Refinement

- Review all test call transcripts and recordings

- Identify failure patterns and update prompts or flows accordingly

- Adjust voice settings (speed, tone, pause duration)

- Refine escalation triggers based on testing results

- Add missing knowledge base content identified during testing

Week 4: Soft Launch, Monitoring, and Optimization

Days 16-17: Soft Launch

- Route 10-20% of live call traffic to the AI agent

- Monitor calls in real time for the first 4-8 hours

- Have human agents on standby for immediate escalation

Days 18-19: Scale Up

- Increase to 50% of call traffic if soft launch metrics are acceptable

- Continue daily transcript review and prompt refinement

- Address any integration issues that surface under real-world conditions

Day 20: Full Deployment

- Route 100% of applicable calls to the AI agent

- Maintain human escalation path

- Set up ongoing monitoring dashboards and alerting
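
The 10-20%, 50%, and 100% ramp in Week 4 can be implemented as a deterministic percentage split. The sketch below is one possible approach, assuming routing is keyed on the caller's phone number; `route_to_ai` is a hypothetical helper, and many platforms or telephony providers offer an equivalent setting.

```python
import hashlib


def route_to_ai(caller_id: str, ai_percent: int) -> bool:
    """Deterministically send roughly ai_percent of callers to the AI agent."""
    if not 0 <= ai_percent <= 100:
        raise ValueError("ai_percent must be between 0 and 100")
    digest = hashlib.sha256(caller_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < ai_percent
```

Hashing the caller ID instead of picking randomly means a repeat caller gets the same experience on every call, and any caller routed to the AI at 10% stays routed to it as the share rises to 50% and 100%.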

Post-Launch: Ongoing Optimization

First 30 Days Post-Launch:

- Review transcripts for the lowest-rated calls weekly

- Refine prompts based on real conversation patterns

- Expand to additional call types once core types are performing well

- Monitor and optimize for emerging failure patterns

Ongoing:

- Monthly performance review (containment rate, CSAT, latency trends)

- Quarterly knowledge base refresh

- A/B test prompt variations to improve task completion rates

- Stay current with platform updates and new capabilities
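
When A/B testing prompt variations, it is worth checking that a difference in task completion is larger than chance would explain. The sketch below uses a standard two-proportion z-test; the function name is illustrative, and the significance level you act on is a choice for your team.

```python
import math


def two_proportion_z_test(
    success_a: int, total_a: int, success_b: int, total_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in completion rates B - A."""
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both variants need at least one call")
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    variance = pooled * (1 - pooled) * (1 / total_a + 1 / total_b)
    if variance == 0:
        raise ValueError("completion rate is 0% or 100% in both variants")
    z = (rate_b - rate_a) / math.sqrt(variance)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value
```

For example, 700 completed tasks out of 1,000 calls on prompt A versus 760 out of 1,000 on prompt B gives a positive z and a p-value below 0.01, which suggests the improvement is unlikely to be noise.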

11. Compliance and Security Guide

Compliance is not optional — it is a business requirement that can determine whether an AI voice agent deployment is viable in your industry. This section covers the major regulatory frameworks that affect AI voice agents.

HIPAA (Healthcare)

The Health Insurance Portability and Accountability Act applies to any AI voice agent that handles Protected Health Information (PHI) — patient names, medical record numbers, appointment details, diagnoses, or treatment information.

Requirements for AI voice agents:

- Signed Business Associate Agreement (BAA) with the voice agent platform vendor

- Encryption of all PHI in transit (TLS 1.2+) and at rest (AES-256)

- Access controls and audit logging for all PHI access

- Data retention and deletion policies

- Breach notification procedures

What to verify: Request the vendor's BAA before any testing with real patient data. Confirm that call recordings, transcripts, and conversation logs are stored in HIPAA-compliant infrastructure. Verify that the vendor's sub-processors (STT, LLM, TTS providers) are also covered under the BAA chain.

TCPA (Telemarketing — US)

The Telephone Consumer Protection Act governs outbound calls and has severe penalties ($500-$1,500 per violation). AI voice agents making outbound calls must comply rigorously.

Requirements:

- Prior express written consent before making automated outbound calls to mobile phones

- Compliance with the National Do-Not-Call Registry

- Calling hour restrictions (8 AM - 9 PM in the recipient's time zone)

- Identification disclosure (the AI must identify who is calling and provide a callback number)

- Opt-out mechanism on every call

What to verify: Ensure the platform maintains do-not-call list integration, enforces calling hour restrictions automatically, and logs consent records. The AI agent must clearly identify itself as automated at the beginning of outbound calls (some states require explicit disclosure that the caller is not human).
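
A pre-dial check along these lines can be sketched as follows. It is a simplified illustration and not legal advice: it assumes you already hold a do-not-call set and a consent flag for each number, and it covers only the calling-hour window, the do-not-call check, and the consent check named above. The function name and the fixed-offset time zone are hypothetical.

```python
from datetime import datetime, time, timedelta, timezone, tzinfo

CALL_WINDOW_START = time(8, 0)  # 8 AM in the recipient's time zone
CALL_WINDOW_END = time(21, 0)   # 9 PM in the recipient's time zone


def may_place_call(
    now: datetime,
    recipient_tz: tzinfo,
    number: str,
    do_not_call: set[str],
    has_written_consent: bool,
) -> bool:
    """Return True only if consent, do-not-call, and calling-hour checks all pass."""
    if now.tzinfo is None:
        raise ValueError("now must be timezone-aware")
    if number in do_not_call or not has_written_consent:
        return False
    local_time = now.astimezone(recipient_tz).time()
    return CALL_WINDOW_START <= local_time < CALL_WINDOW_END


# Fixed UTC-5 offset for demonstration only; it ignores daylight saving time.
EASTERN_STANDARD = timezone(timedelta(hours=-5))
```

In production you would likely pass a `zoneinfo.ZoneInfo` value such as `ZoneInfo("America/New_York")` so daylight saving time is handled, and log every decision alongside the consent record.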

GDPR (Europe)

The General Data Protection Regulation applies to any AI voice agent handling personal data of individuals in the EU, regardless of where the business is located.

Requirements:

- Lawful basis for processing (typically consent or legitimate interest)

- Data minimization — only collect and process data necessary for the stated purpose

- Right to access, correction, and deletion of personal data

- Data Processing Agreement (DPA) with the vendor

- Data transfer mechanisms for processing outside the EU (Standard Contractual Clauses)

- Privacy notice informing callers about AI processing

What to verify: Confirm that the vendor offers EU data residency (data stored and processed within the EU). Verify that transcripts and recordings can be deleted upon request. Ensure the vendor has signed Standard Contractual Clauses if data is processed outside the EU.
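
To make the deletion requirement concrete, the sketch below shows the shape of an erasure routine over an in-memory store. It is an assumption-laden illustration: `CallStore` and `erase_caller` are hypothetical, and a real deployment would also need to propagate the request to the vendor, its sub-processors, and backups.

```python
from dataclasses import dataclass, field


@dataclass
class CallStore:
    """Toy store keyed by caller ID; stands in for real transcript and audio storage."""
    transcripts: dict[str, list[str]] = field(default_factory=dict)
    recordings: dict[str, list[bytes]] = field(default_factory=dict)

    def erase_caller(self, caller_id: str) -> dict[str, int]:
        """Delete everything held for one caller and report how much was removed."""
        return {
            "transcripts": len(self.transcripts.pop(caller_id, [])),
            "recordings": len(self.recordings.pop(caller_id, [])),
        }
```

Returning the counts gives you something to record as evidence that the request was fulfilled; a second call for the same caller returns zeros.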

SOC 2 Type II

SOC 2 Type II is an independent audit report that attests to how well a vendor's security controls operated over a sustained period (typically 6-12 months). It is the baseline security attestation expected by enterprise buyers.

What it covers: Security, availability, processing integrity, confidentiality, and privacy controls.

What to verify: Request the vendor's SOC 2 Type II report (not just Type I, which only verifies control design at a point in time). Review any noted exceptions. Confirm the report was issued within the last 12 months.

PCI DSS (Payment Handling)

If your AI voice agent handles credit card numbers or payment information during calls, PCI DSS compliance is required.

Requirements:

- Never record or store full credit card numbers in call recordings or transcripts

- Use secure payment processing (DTMF masking or secure handoff to payment processor)

- Encrypt all cardholder data in transit and at rest

- Regular security assessments and penetration testing

What to verify: Most AI voice agent platforms recommend pausing recording during payment collection and using DTMF (keypad) input for card numbers rather than spoken digits. Verify that the platform supports this workflow.
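
Pausing recording and using DTMF input are the primary controls. As a backstop, some teams also scan stored transcripts for card numbers that slipped through. The sketch below is one hedged way to do that, assuming digits appear as numerals in the transcript; it uses the standard Luhn checksum to reduce false positives, and the function names and placeholder text are illustrative.

```python
import re

# 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum used by payment card numbers."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def redact_card_numbers(text: str) -> str:
    """Replace Luhn-valid card-like digit runs with a placeholder."""
    def _mask(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[REDACTED CARD]" if luhn_valid(digits) else match.group()

    return CARD_CANDIDATE.sub(_mask, text)
```

This does not catch digits transcribed as words ("four one one one"), so it complements the pause-and-DTMF workflow rather than replacing it.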

Call Recording Laws

Call recording laws vary significantly by jurisdiction and directly affect AI voice agent deployments:

- One-party consent (US federal, ~38 US states): Only one party to the call needs to consent to recording; in practice, the business operating the AI agent is typically treated as the consenting party.

- Two-party / all-party consent (~12 US states including California, Illinois, Pennsylvania): All parties must be informed and consent to recording.

- EU (GDPR): Caller must be informed, and recording must have a lawful basis.

Practical implementation: Best practice is to include a brief disclosure at the beginning of every call: "This call may be recorded for quality and training purposes." This covers two-party consent requirements in most jurisdictions.

12. The Future of AI Voice Agents (2026-2030)

The AI voice agent market is evolving rapidly. Here are the five trends we expect to define the next four years based on current technology trajectories, investment patterns, and early-stage research.

Multimodal Agents (Voice + Vision + Screen)

By 2027-2028, AI voice agents will not be limited to audio-only interactions. Multimodal agents will be able to guide callers through visual tasks — "I can see your screen; let me walk you through changing your password" — by combining voice conversation with screen sharing, camera input, or augmented reality overlays. Early implementations of this already exist in video-based customer support, and the convergence with voice agents is a matter of engineering, not fundamental research.

Real-Time Emotion Detection and Adaptive Response

Current voice agents detect sentiment from word choice, but next-generation systems will analyze vocal biomarkers — pitch variation, speech rate, pause patterns, vocal stress — to detect emotions in real time. This enables adaptive behavior: slowing down and using softer language for stressed callers, matching energy with enthusiastic callers, and escalating proactively when frustration is detected before the caller asks for a manager.

Real-Time Translation and Cross-Lingual Conversations

By 2027, we expect production-grade AI voice agents to handle conversations where the caller speaks one language and the agent responds in another, with real-time translation happening transparently. This is already technically possible (combining STT in language A, translation, and TTS in language B), but current latency makes it impractical. Advances in end-to-end multimodal models will reduce translation latency to imperceptible levels.

Proactive Outbound Engagement

Today's voice agents are primarily reactive — they wait for the phone to ring. Future agents will initiate context-aware outreach: calling a customer whose subscription is about to renew with a personalized update, reaching out to a patient who missed a medication refill, or alerting a traveler about a flight delay with rebooking options. The shift from reactive to proactive will significantly increase the ROI of voice agent deployments but requires careful attention to consent management and TCPA compliance.

Agent-to-Agent Communication

As AI agents become more prevalent, a growing percentage of phone interactions will be between two AI agents — one representing a business and another representing a consumer. A patient's personal AI assistant might call a clinic's scheduling agent to negotiate an appointment time that works with the patient's calendar. This machine-to-machine voice communication will require new protocols and standards for AI-to-AI negotiation, authentication, and data exchange.

13. FAQ — Frequently Asked Questions

What is an AI voice agent?

An AI voice agent is an autonomous software system that conducts real-time, two-way telephone conversations using artificial intelligence. It combines speech-to-text, large language models, and text-to-speech technologies to understand callers, process their requests, and respond with natural-sounding speech — all without human intervention. Unlike traditional IVR systems, AI voice agents handle open-ended, dynamic conversations.

How much does an AI voice agent cost?

AI voice agent costs range from $0.07 to $0.50 per minute depending on the platform, features, and volume. A typical mid-market deployment handling 10,000 calls per month at 3 minutes average costs $2,100-$15,000 per month. However, hidden costs (telephony, LLM APIs, premium voices, overages) can inflate the advertised price by 40-60%. Some platforms like SuperMIA use pay-per-task pricing tied to completed actions rather than call duration.
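
The arithmetic behind that range is easy to reproduce for your own volumes. The sketch below uses the figures from this answer (10,000 calls, 3 minutes each, $0.07 to $0.50 per minute) and treats the 40-60% hidden-cost uplift as an optional multiplier; the function names are illustrative.

```python
from decimal import Decimal


def monthly_minutes(calls_per_month: int, avg_minutes_per_call: Decimal) -> Decimal:
    """Total billable minutes per month."""
    return Decimal(calls_per_month) * avg_minutes_per_call


def cost_range(
    minutes: Decimal,
    low_rate: Decimal,
    high_rate: Decimal,
    uplift: Decimal = Decimal("0"),
) -> tuple[Decimal, Decimal]:
    """Return (low, high) monthly cost, optionally inflated by a hidden-cost uplift."""
    factor = Decimal("1") + uplift
    return minutes * low_rate * factor, minutes * high_rate * factor


minutes = monthly_minutes(10_000, Decimal("3"))                        # 30,000 minutes
advertised = cost_range(minutes, Decimal("0.07"), Decimal("0.50"))     # $2,100 to $15,000
```

Applying a 40% uplift to the low end and a 60% uplift to the high end widens the range to roughly $2,940 to $24,000 per month, which is the figure to budget against if hidden costs apply in full.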

Can AI voice agents replace human agents?