Table of Contents

- The 9-Day Problem That Is Quietly Killing Your Pipeline

- What Is AI Candidate Screening?

- Why the 80% Claim Is Not Marketing

- How AI Candidate Screening Actually Works

- Where AI Screening Goes Wrong (and Nobody Wants to Talk About It)

- Where AI Screening Actually Pays Off

- AI Screening vs. the Alternatives

- The Time-to-Hire Gap, Visualized

- Real-World Case Study: Softqube + SuperMIA IntelliHire

- The Three Objections You Will Hear in the Buying Committee

- How SuperMIA IntelliHire Closes the 48-Hour Window

- Frequently Asked Questions

- Stop Losing Hires in the First 48 Hours

Quick Answer

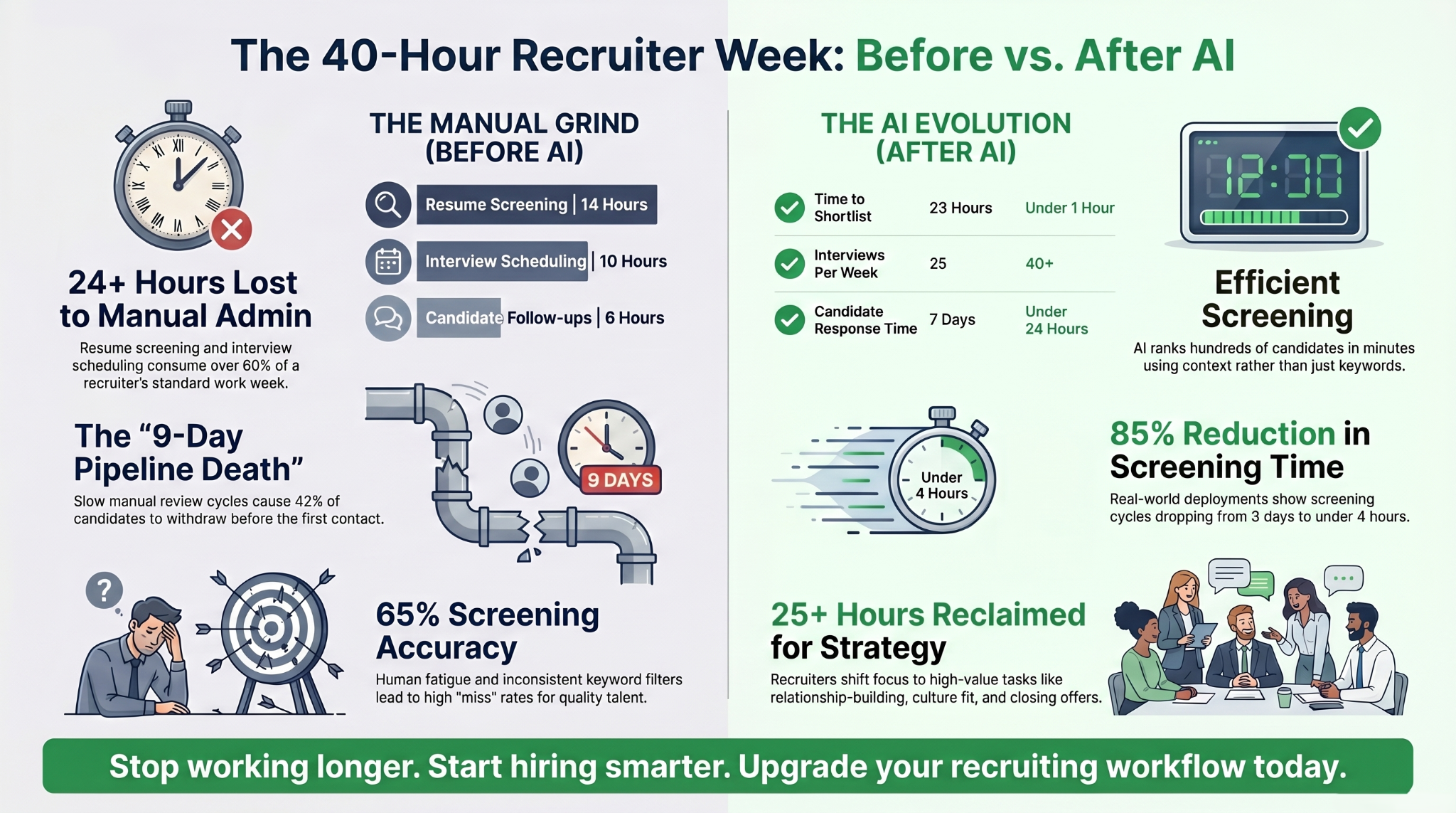

Manual screening averages 23 hours per hire; AI cuts this to under 1 hour with 85–95% accuracy. The global time-to-hire is 44 days; companies using AI screening land at 25 days, top quartile at 10. AI does not replace recruiters — 93% of hiring managers still want humans in the loop — but it eliminates the 23 hours of admin that prevent recruiters from moving fast enough.

The 9-Day Problem That Is Quietly Killing Your Pipeline

Your careers page got 350 applications for one role last week. Your hiring manager has 40 open minutes on Thursday. By the time the shortlist lands, the best candidate has already accepted a competing offer. You did not lose them on comp. You lost them on speed.

This is the reality of hiring in 2026. Applicants are up, recruiter headcount is flat, and top candidates leave the market in about 10 days. Meanwhile, 42% of candidates quietly withdraw because nobody got back to them fast enough. The bottleneck is not sourcing. It is the first 48 hours after someone applies.

"Speed kills. Not in the fast and furious way — in the 'you waited 9 days to review 3 resumes and now the best one accepted somewhere else' way."

LinkedIn's 2025 Global Talent Trends report found top candidates leave the active market in an average of 10 days — consistent with what practitioners on the ground have been observing for years.

This guide walks through how AI candidate screening closes that 48-hour window — the real numbers, the failure modes, and a full before/after from a live SuperMIA deployment.

TL;DR

- Manual screening averages 23 hours per hire; AI cuts this to under 1 hour with 85–95% accuracy.

- The global time-to-hire is 44 days; companies using AI screening land at 25 days, top quartile at 10.

- Real deployment: SuperMIA IntelliHire cut one client's resume screening from 2–3 days to under 4 hours, with 80% of initial screening fully automated.

- AI does not replace recruiters. 93% of hiring managers still want humans in the loop — especially for final calls.

- Legal exposure is real — NYC Local Law 144, the EU AI Act, and the iTutorGroup EEOC settlement (US$365K) all say the same thing: audit, disclose, keep a human in the loop.

See It in Action → Book a SuperMIA IntelliHire Demo

What Is AI Candidate Screening?

AI candidate screening is software that uses machine learning and natural language processing to read resumes and applications, score each candidate against the role, rank them by predicted fit, and hand recruiters a ranked shortlist in minutes instead of days. It replaces the keyword-match approach of legacy ATS filters with contextual understanding — so a résumé that says "built internal tools" can be correctly matched to a job posting for "full-stack engineer."

Modern systems operate at the top of the funnel. They do not make the hire. They do the part that is volume-dependent, repetitive, and — if we are honest — where most human screening is least accurate.

Key Takeaways

- AI screening ranks 100 resumes in under an hour; a human reviewer needs 6–8 hours for the same stack.

- Accuracy jumps from ~65% (manual) to ~90% (AI) because reviewer fatigue and reviewer-to-reviewer inconsistency drop out.

- Time-to-shortlist falls by 75% on average across published deployments.

- The risk is not that AI screens poorly — it is that un-audited AI screens unfairly.

- Done right, AI frees recruiters for the parts hiring actually turns on: relationships, closing, and culture fit.

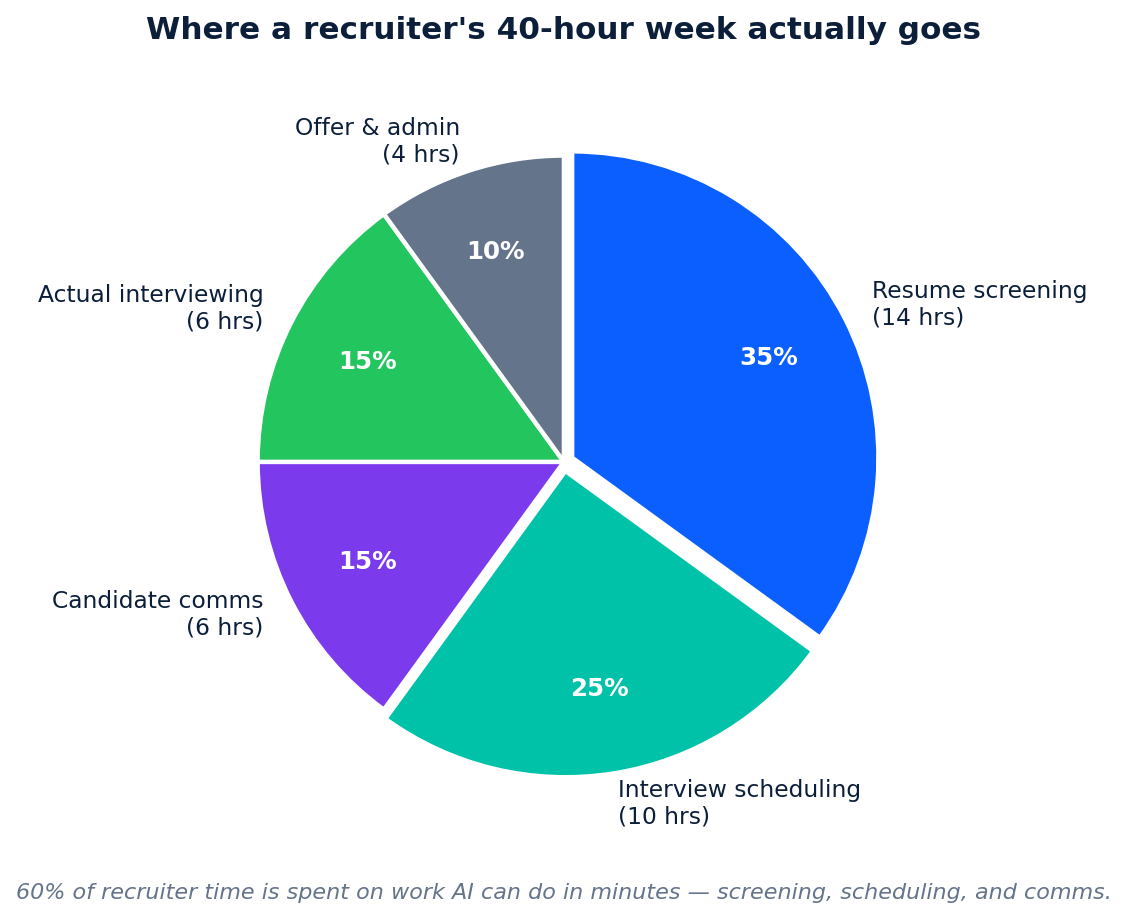

Why the 80% Claim Is Not Marketing

The headline number comes from basic arithmetic, not a vendor deck. Here is the math.

A senior recruiter spends about 14 hours a week on resume screening and another 10 hours on interview scheduling — based on recruiter time studies published by Workable and SSR. That is 24 of 40 hours on work that does not require human judgment. Layer in 6 hours of candidate chasing and you are at 30.

Now run the substitution. AI screens 100+ resumes in under an hour. AI schedules via two-way calendar sync in seconds. AI sends the "we received your application" note and the first qualification question instantly, not three days later. You do not get the 80% by replacing a recruiter. You get it by deleting the roughly 24 hours of screening and scheduling admin inside their week.
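
Here is that arithmetic as a short Python sketch. The hour figures come from the paragraphs above; reading the 80% as "screening plus scheduling as a share of the 30 admin hours" is our assumption, not something the cited time studies state.

```python
# Weekly recruiter hours quoted in the text (40-hour week).
SCREENING_HOURS = 14
SCHEDULING_HOURS = 10
CHASING_HOURS = 6
WEEK_HOURS = 40

# Work the text says AI can take over directly.
automatable = SCREENING_HOURS + SCHEDULING_HOURS
# Total admin load once candidate chasing is layered in.
admin_total = automatable + CHASING_HOURS

# Assumption: the 80% headline is the automatable share of admin time.
share_of_admin = automatable / admin_total
share_of_week = automatable / WEEK_HOURS

print(f"Screening + scheduling: {automatable} h of {WEEK_HOURS} h")
print(f"Share of admin hours:   {share_of_admin:.0%}")
print(f"Share of the full week: {share_of_week:.0%}")
```

Swap in your own team's hours before quoting any percentage internally; the result is only as good as the time study behind it.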

The upstream effect is what actually matters. When recruiters stop doing resume triage, they pick up the phone sooner, and candidate response times collapse from 7 days to under 24 hours. Paradox's client data and LinkedIn's 2025 Future of Recruiting report both put the lift at around 9% higher quality-of-hire, just from speed.

How AI Candidate Screening Actually Works

Any AI screening workflow that will survive your compliance review has four stages. Skip any one and you end up with a faster version of your current mess.

Step 1 — Parse and Enrich

The system ingests the resume (PDF, DOCX, LinkedIn URL, career-site form), extracts structured fields, and enriches missing signal from public profiles. Modern parsers hit 94% accuracy on field extraction, so you stop losing candidates to formatting.

Step 2 — Score Against the Job

A ranking model scores each candidate across hard skills, experience depth, trajectory, and role alignment. The output is a 0–100 fit score with per-criterion breakdown — not a binary pass/fail. Each dimension is weighted using your own historical hiring outcomes, not a generic model.
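
A minimal sketch of what a weighted fit score with a per-criterion breakdown can look like. The criterion names follow the paragraph above; the weights and the `score_candidate` function are hypothetical illustrations, not IntelliHire's actual model.

```python
from dataclasses import dataclass

# Hypothetical weights. In practice these would be fitted to your own
# historical hiring outcomes. They should sum to 1.0.
WEIGHTS = {
    "hard_skills": 0.40,
    "experience_depth": 0.25,
    "trajectory": 0.15,
    "role_alignment": 0.20,
}


@dataclass
class FitScore:
    total: float                 # overall fit on a 0-100 scale
    breakdown: dict[str, float]  # points contributed by each criterion


def score_candidate(criteria: dict[str, float]) -> FitScore:
    """Combine per-criterion scores (each 0-100) into a weighted 0-100 total."""
    missing = WEIGHTS.keys() - criteria.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    breakdown = {name: round(criteria[name] * w, 1) for name, w in WEIGHTS.items()}
    return FitScore(total=round(sum(breakdown.values()), 1), breakdown=breakdown)


result = score_candidate(
    {"hard_skills": 90, "experience_depth": 70, "trajectory": 80, "role_alignment": 85}
)
print(result.total)      # overall score
print(result.breakdown)  # what each criterion contributed
```

Returning the breakdown alongside the total is the design choice that matters: it is what lets a recruiter, or an auditor, see why a candidate landed where they did.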

Step 3 — First Conversation, Automated

The top 20–30% get an instant, natural-sounding voice or chat conversation: 4–6 structured qualifying questions, knockout checks, and scheduling. This is where speed-to-lead lives. For hourly or high-volume roles, the candidate is booked into a calendar slot before they close the tab.
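
The selection logic in this step can be sketched as follows. The 25% cut sits inside the 20–30% band from the text; the candidate ids, scores, and the two knockout checks are made-up examples, and real checks depend on the role.

```python
import math


def top_slice(scores: dict[str, float], share: float = 0.25) -> list[str]:
    """Return candidate ids in the top `share` of the pool, best first."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[: math.ceil(len(ranked) * share)]


# Hypothetical knockout checks applied to answers from the first conversation.
KNOCKOUTS = {
    "work_authorization": lambda a: a.get("work_authorization") is True,
    "can_start_within_days": lambda a: a.get("can_start_within_days", 999) <= 30,
}


def failed_knockouts(answers: dict) -> list[str]:
    """Names of the knockout checks this candidate's answers fail."""
    return [name for name, check in KNOCKOUTS.items() if not check(answers)]


scores = {"c1": 62, "c2": 91, "c3": 78, "c4": 55, "c5": 84, "c6": 70, "c7": 49, "c8": 66}
invited = top_slice(scores)  # 8 candidates at a 25% share gives 2 invitations

# Answers collected during the automated conversation (illustrative).
answers = {
    "c2": {"work_authorization": True, "can_start_within_days": 14},
    "c5": {"work_authorization": False, "can_start_within_days": 7},
}
to_schedule = [cid for cid in invited if not failed_knockouts(answers[cid])]
print(invited, to_schedule)
```

Recording which check a candidate failed, rather than a bare pass/fail, keeps this step consistent with the explainability requirement in Step 4.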

Step 4 — Explainable Handoff to the Recruiter

The recruiter opens a shortlist with a one-page summary per candidate: score, strongest criteria, weakest criteria, full transcript of the AI conversation, and flagged risks. No black box. If you cannot explain why a candidate scored where they did, do not ship that system.

→ Book a 15-Minute SuperMIA IntelliHire Walkthrough

Where AI Screening Goes Wrong (and Nobody Wants to Talk About It)

Every blog on this topic sells the upside. We are going to name the failure modes, because you will get asked about them in the compliance review.

1. Training Data That Encodes the Last Five Years of Bias

The canonical warning: Amazon shelved an internal recruiting tool in 2018 because it systematically penalized resumes that mentioned "women's." The model had learned from a decade of male-skewed hiring. If your AI trains on your own historic outcomes and your historic outcomes are biased, the AI scales the bias.

2. Opaque Scoring That Breaks Under EEOC Scrutiny

In 2023, iTutorGroup paid a US$365,000 EEOC settlement after its AI allegedly rejected older applicants automatically: women aged 55 or older and men aged 60 or older. There was no way for the company to explain the scores to the regulator. Any screening system you buy in 2026 must produce per-candidate reasoning that a compliance officer can read.

3. Candidate Distrust That Costs You the Pipeline

Pew Research found that 66% of US adults say they would not want to apply for a job with an employer that uses AI to help make hiring decisions. That does not mean you cannot use it. It means you must disclose it, use it only at the top of the funnel, and keep a human on the final decision. Do that and 67% of candidates are comfortable with AI screening (Glassdoor, 2024).

The Legal Map in 2026

NYC Local Law 144 requires annual bias audits for automated employment decision tools. Illinois and Maryland have consent laws for AI video interviews. The EU AI Act classifies hiring AI as 'high-risk' with disclosure, audit, and human-oversight requirements phasing in through 2027. Emotion-recognition AI in hiring has been banned in the EU since February 2025. Ship nothing you cannot audit.
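
To make "bias audit" concrete: the core metric in a Local Law 144 audit is the impact ratio, each category's selection rate divided by the selection rate of the most-selected category. The sketch below uses invented counts, and the 0.8 flag is the EEOC "four-fifths" rule of thumb, used here as an assumed internal alert level rather than a threshold set by the law. A real audit must be run by an independent auditor.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Compute impact ratios from {category: (selected, applicants)} counts."""
    rates = {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no category has any selections")
    # Each category's selection rate relative to the most-selected category.
    return {g: round(r / best, 2) for g, r in rates.items()}


# Invented example counts: (candidates advanced, candidates screened).
sample = {"group_a": (48, 120), "group_b": (30, 100), "group_c": (27, 90)}

ratios = impact_ratios(sample)
# Assumption: flag anything below the four-fifths rule of thumb for review.
flagged = [g for g, r in ratios.items() if r < 0.8]
print(ratios, flagged)
```

Running this monthly on your own funnel data will not replace the annual audit, but it tells you what the auditor is likely to find before they find it.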

Where AI Screening Actually Pays Off

AI screening is not a universal upgrade. It shines in four specific situations. Outside of these, stay manual — or stay hybrid.

High-Volume Enterprise Hiring

If you are a retailer, hospitality chain, BPO, or any business hiring 500+ hourly workers a quarter, AI screening is no longer optional. Paradox's Olivia — deployed by Unilever, FedEx, and McDonald's — runs end-to-end screening in under 48 hours where the old process took 5–7 days. Unilever alone saved 50,000+ interview hours and over £1M a year, with measurable diversity gains on top.

Technical and Engineering Roles

Resumes for software, data, and ML roles are the hardest to evaluate manually: titles are inconsistent, stacks change quarterly, and hiring managers are expensive to involve at the top of the funnel. Semantic-match AI (not keyword match) now outperforms hiring managers on the initial technical screen, with roughly 60% higher recall (Second Talent, 2025).

Campus and Graduate Programs

Graduate programs commonly see 5,000+ applications for 50 slots. Before AI, the screening decision was "who went to the top 20 schools." That filter is now legally risky in several jurisdictions and empirically weak at predicting performance. AI with blind screening cuts gender bias by 54% and lifts underrepresented-minority shortlisting by 35%.

Contact Centers and Customer Support

Voice-based AI screens candidates on the actual signal that predicts performance: how they hold a conversation. The AI runs the first 4-minute qualification call, flags tone and language proficiency, and books qualified candidates directly. Retail and hospitality deployments (Harri, GoodTime) report 20–35% drops in candidate no-show rates.

AI Screening vs. the Alternatives

| Factor | Manual Review | Keyword ATS | Offshore / Outsourced | SuperMIA IntelliHire |
|---|---|---|---|---|
| Time to shortlist (100 resumes) | 6–8 hours | ~5 minutes (crude) | 4–6 hours | Under 60 minutes |
| Accuracy vs. role fit | 60–70% | 40–50% | 65–75% | 89–94% |
| Consistency across reviewers | Varies by time of day | Consistent but crude | Training-dependent | Consistent and nuanced |
| Explainable score per candidate | In the recruiter's head | None | Partial (notes) | Per-criterion breakdown |
| Bias-audit ready | No | Limited | No | Yes (NYC LL 144, EU AI Act) |
| Interview scheduling included | No | No | Partial | Yes, two-way calendar sync |
| Cost for 200 hires/year | ~US$380K (recruiter load) | ~US$18K | ~US$140K | ~US$36K–60K |

Cost figures use SHRM average cost-per-hire (US$4,700) and AdAI 2026 recruiter time benchmarks. SuperMIA pricing varies by volume and integrations; typical mid-market range shown.

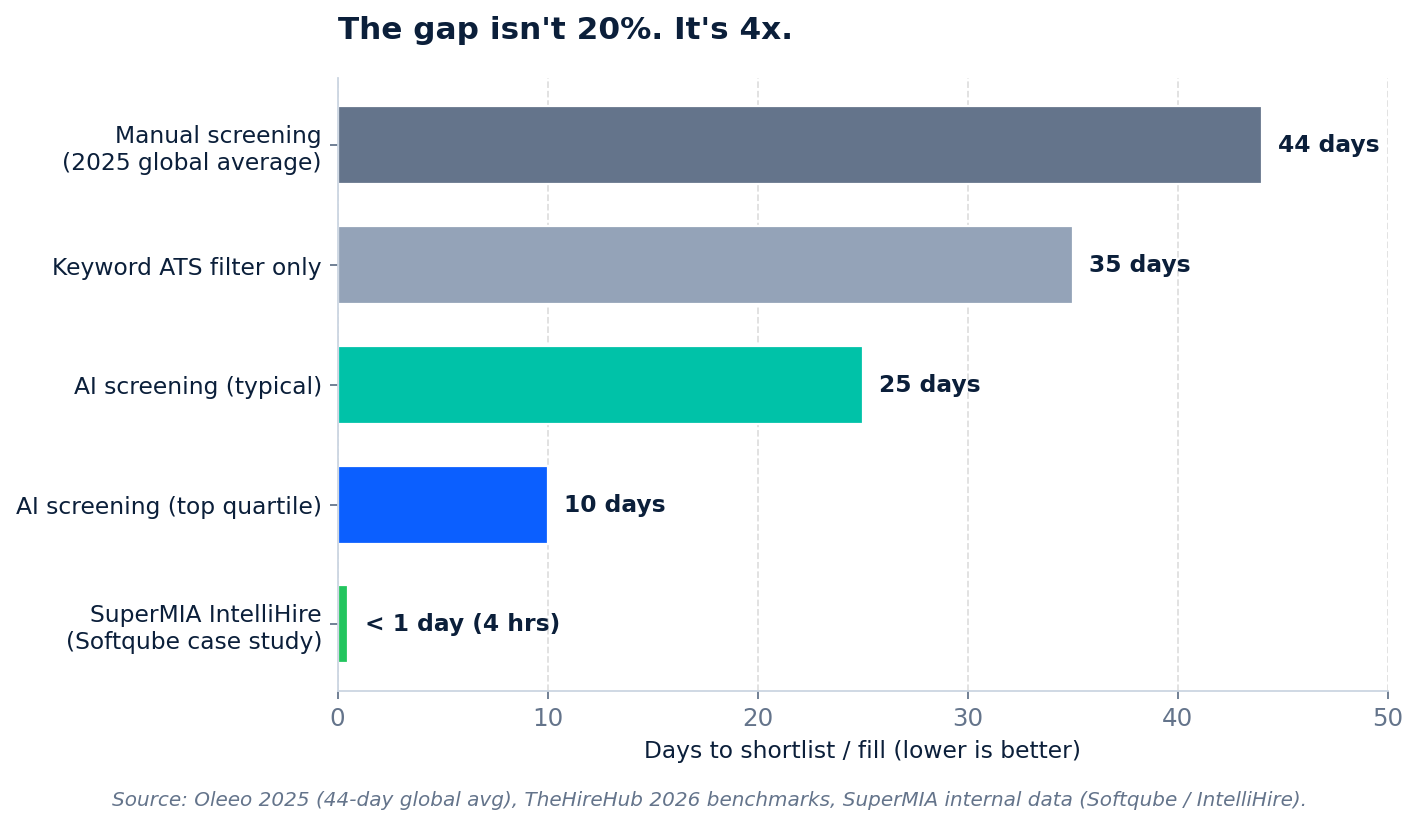

The Time-to-Hire Gap, Visualized

The gap between screening methods is not incremental. It is structural. A manual-only team and an AI-augmented team are not running the same race.

The 44-day number is the published 2025 global average from Oleeo. The 10-day top-quartile benchmark comes from published deployments at Unilever, FedEx, and other enterprise-scale AI screening programs. The "under 1 day" SuperMIA figure is the real resume-screening cycle time Softqube saw after deploying IntelliHire — which is the next section.

Real-World Case Study: Softqube + SuperMIA IntelliHire

Softqube Technologies is a 120-person software services firm hiring across frontend, backend, QA, and DevOps roles. Before deploying IntelliHire, their HR team was running 100% manual screening. Two hires a week was a good week. Their pain looked like every other fast-growing services firm's pain: too many resumes, not enough hours, inconsistent screening across recruiters.

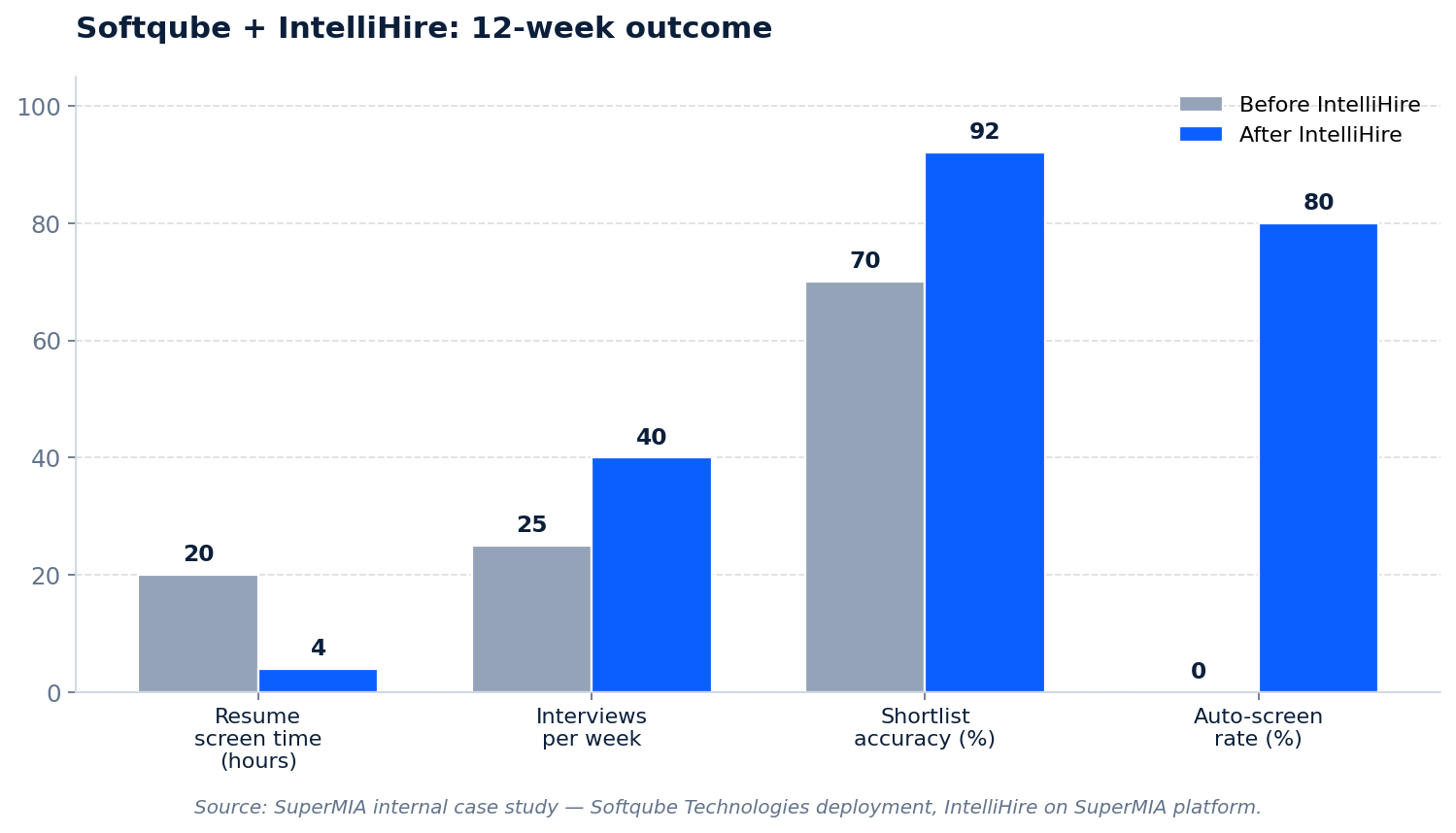

What Changed in 12 Weeks

IntelliHire — the interview agent built on SuperMIA — took over resume parsing, fit scoring, and the first-round video interview. Recruiters still owned final calls, culture fit, and the offer conversation.

The Numbers That Moved

- Resume screening time: 2–3 days → under 4 hours (≈85% reduction)

- Interviews per week: 25 → 40+ (60% lift in throughput)

- Shortlist accuracy: 70% → 92% (measured by hiring-manager approval rate)

- Candidate reach: +19% across LinkedIn and Indeed

- Recruiter satisfaction: 45 → 58.4 on internal NPS

- Auto-screened share: 0% → 80% — humans now handle only the edge cases

The telling metric is the recruiter NPS. Nothing breaks a hiring program faster than recruiter burnout. A 13-point NPS gain in 12 weeks is not an automation story — it is a retention story.

Full case study: supermia.ai/use-cases/softqube-intellihire-ai-interview-agent-case-study/

Screen 50 Candidates in 10 Minutes — Book a Demo

The Three Objections You Will Hear in the Buying Committee

"AI Will Miss Unique Candidates"

Valid concern. Un-tuned models do miss nonlinear profiles — the liberal-arts grad turned PM, the self-taught engineer with no degree. The fix is not "use less AI," it is "use AI that you can tune." Add criteria weighting, disable degree requirements, enable skills-first mode, and review the false-negative report weekly for the first 60 days.

"Candidates Will Feel Like a Number"

They already feel like a number when a human takes 9 days to reply. 79% of candidates say transparency is what they want — not the absence of AI. Disclose that AI is used at the top of the funnel, explain what it evaluates, and show how a human takes over after the shortlist. Candidate completion rates hold above 89% when the process is transparent (Willo, 2026).

"How Do We Trust the Score?"

Same way you trust any recruiter — by auditing the output. A modern AI screening system produces a per-candidate score breakdown, a transcript, and an audit log. Sample 20 rejections a month and check them against a human review. If disagreement is above 15%, the model needs retuning. If it is under 5%, you have more signal than most human-only teams.
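
The monthly audit described above can be sketched in a few lines. The 20-candidate sample and the 15% and 5% thresholds come from the text; the function names, the candidate ids, and the "monitor" label for the zone in between are our assumptions.

```python
import random


def audit_sample(rejected_ids, k=20, seed=None):
    """Draw up to k AI-rejected candidates at random for human re-review."""
    pool = list(rejected_ids)
    return random.Random(seed).sample(pool, min(k, len(pool)))


def disagreement_rate(sample, human_decisions):
    """Share of sampled AI rejections the human reviewer would have advanced."""
    if not sample:
        raise ValueError("empty audit sample")
    overturned = sum(1 for cid in sample if human_decisions[cid] == "advance")
    return overturned / len(sample)


def verdict(rate):
    """Map a disagreement rate to an action using the thresholds in the text."""
    if rate > 0.15:
        return "retune"
    if rate < 0.05:
        return "healthy"
    return "monitor"  # assumption: the text does not name the 5-15% zone


rejected = [f"cand-{i}" for i in range(200)]  # hypothetical ids
sample = audit_sample(rejected, seed=7)

# Illustrative human review: the reviewer would have advanced 4 of the 20.
human = {cid: ("advance" if i < 4 else "reject") for i, cid in enumerate(sample)}
rate = disagreement_rate(sample, human)
print(f"{rate:.0%} disagreement -> {verdict(rate)}")
```

Note the resolution limit: with 20 samples, each disagreement moves the rate by 5 points, so "under 5%" means zero overturned rejections that month. A larger sample gives a finer reading.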

How SuperMIA IntelliHire Closes the 48-Hour Window

IntelliHire is the agent you deploy once and stop thinking about. It runs inside SuperMIA's enterprise agentic AI platform, which means it inherits the platform's compliance, audit, and integration stack rather than arriving as a bolted-on point tool.

- Reads every resume and ranks it in under 60 seconds using context, not keywords — so the "built internal tools" engineer does not get filtered out

- Runs a structured first-round video interview with questions you design, scored consistently across every candidate

- Books qualified candidates directly into your hiring manager's calendar — no back-and-forth

- Pushes every transcript, score, and audit log to your ATS (Workable, Greenhouse, Lever, BambooHR, or your internal system)

- Stays inside your compliance boundary — bias-audit ready for NYC LL 144 and EU AI Act, with demographic-parity monitoring built in

Explore IntelliHire and the SuperMIA platform.

Build Your AI Screening System — Book a Demo →

Frequently Asked Questions

Stop Losing Hires in the First 48 Hours

You do not lose candidates because your job is boring or your brand is weak. You lose them because by the time your shortlist is ready, someone else has already sent an offer. Nine days of manual screening is a decision: a decision to let the pipeline die in triage.

AI candidate screening, done honestly and audited properly, closes the 48-hour window. Recruiters get their week back. Hiring managers see shortlists on day one. Candidates get an answer before they forget they applied. And the compliance officer gets a clean audit log.

The math works. The case study is real. What is left is the decision to deploy it.

Screen 50 Candidates in 10 Minutes → Book a SuperMIA IntelliHire Demo

Harikrishna Patel

Harikrishna Patel is the founder of MIA – My Intelligent Assistant, the AI automation platform built under Botfinity Inc. in Dallas, Texas. With 15+ years in software engineering, AI/ML, and enterprise solution design, he focuses on creating practical, scalable AI tools that help businesses automate support, workflows, and operations through voice and chat.